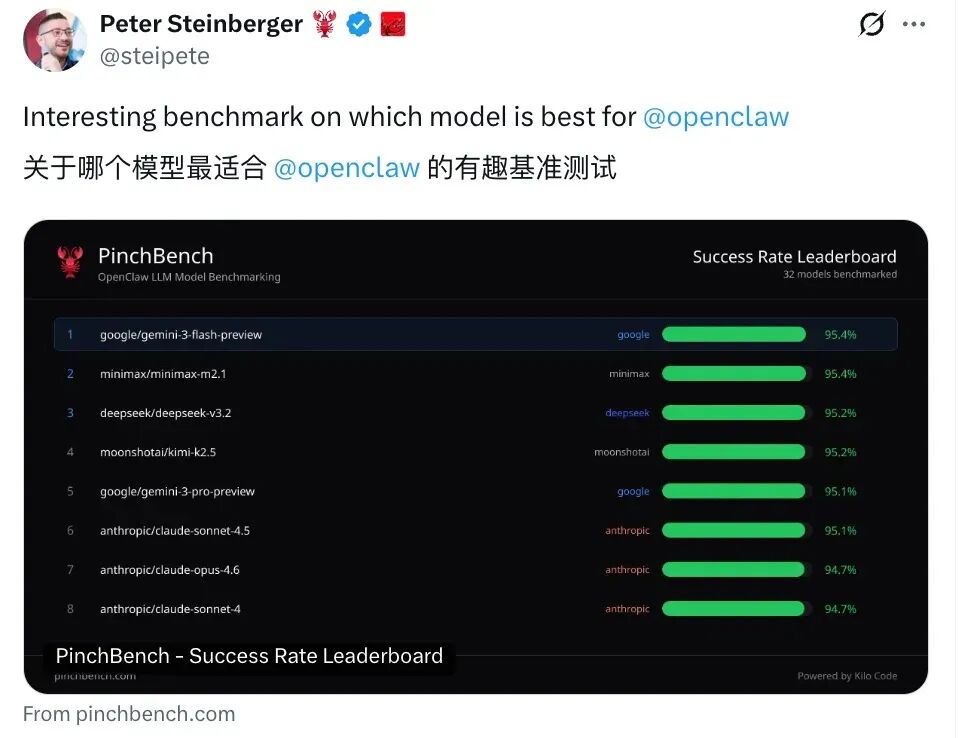

Yesterday, March 9, PinchBench, a benchmark that evaluates how well large language models perform on OpenClaw tasks, was officially released. It tested 32 mainstream models in a single head-to-head comparison of success rate, speed, and cost.

On success rate, Google's Gemini 3 Flash Preview ranked first at 95.1%.

Although it is the "light" version in the Gemini series, it outperformed its own flagship sibling Gemini 3 Pro (91.7%) and also beat Claude Sonnet 4.5 (92.7%) and GPT-4o (85.2%).

Chinese models also performed strongly: MiniMax M2.1 ranked second with a 93.6% success rate, followed closely by Kimi K2.5 at 93.4%, giving Chinese models two of the top three spots worldwide.

Anthropic's flagship model, Claude Opus 4.6, had a success rate of only 90.6% and ranked seventh, behind several mid-tier models.

On speed, MiniMax M2.5 completed the entire test in 105.96 seconds, edging out second-place Gemini 2.0 Flash by 0.09 seconds to take the speed title.

By contrast, Claude Sonnet 4 took 137.66 seconds, while Gemini 3 Pro needed 239.55 seconds, more than twice as long as the fastest model.
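To put the speed gap in perspective, the timings can be expressed relative to the fastest model. The following is a minimal sketch using only the three completion times quoted above; the variable names are illustrative and not part of PinchBench.

```python
# Completion times in seconds, as quoted in the article.
times = {
    "MiniMax M2.5": 105.96,
    "Claude Sonnet 4": 137.66,
    "Gemini 3 Pro": 239.55,
}

# Slowdown factor of each model relative to the fastest one.
fastest = min(times.values())
slowdown = {name: round(t / fastest, 2) for name, t in times.items()}

for name, factor in sorted(slowdown.items(), key=lambda kv: kv[1]):
    print(f"{name}: {factor}x the fastest time")
```

On these figures, Claude Sonnet 4 takes roughly 1.3 times as long as MiniMax M2.5, and Gemini 3 Pro roughly 2.26 times as long.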

On cost, GPT-5 Nano was the cheapest option in the field at $0.03 per task, with a success rate of 85.8%.

Gemini 2.5 Flash Lite followed at $0.05, with a success rate of 83.2%. Claude Opus 4.6 completed the test at a cost of $5.89, nearly 200 times that of GPT-5 Nano, yet its success rate was about 3 percentage points lower than that of MiniMax M2.1.
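The cost comparison can be checked with simple arithmetic. This sketch uses only the figures quoted in the article and assumes the cost figures for GPT-5 Nano and Claude Opus 4.6 are reported on the same basis; the names are illustrative.

```python
# (cost in USD, success rate in %) as quoted in the article.
results = {
    "GPT-5 Nano": (0.03, 85.8),
    "Gemini 2.5 Flash Lite": (0.05, 83.2),
    "Claude Opus 4.6": (5.89, 90.6),
    "MiniMax M2.1": (None, 93.6),  # the article quotes no cost for this model
}

# How many times more expensive Claude Opus 4.6 is than GPT-5 Nano.
cost_ratio = results["Claude Opus 4.6"][0] / results["GPT-5 Nano"][0]

# Success-rate gap between MiniMax M2.1 and Claude Opus 4.6, in percentage points.
success_gap = results["MiniMax M2.1"][1] - results["Claude Opus 4.6"][1]

print(f"Cost ratio: {cost_ratio:.1f}x")
print(f"Success gap: {success_gap:.1f} percentage points")
```

The ratio comes out at about 196, consistent with the "nearly 200 times" figure, and the gap is about 3 percentage points on the rounded success rates.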

PinchBench scores models in three ways: automated checks that validate code execution, quality assessment with Claude Opus as the judge, and a hybrid of the two. All tasks and answers are publicly available on GitHub. The full leaderboard can be found at pinchbench.com.