Yesterday, the Bailing (Ling) large model team officially launched Ling-1T, the first flagship non-thinking model of the Ling 2.0 series.

According to the team, the model is built on the Ling 2.0 architecture and has 1T total parameters, of which about 50B are activated per token. It was pre-trained on more than 20T tokens of high-quality corpus and supports a context window of up to 128K.
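To put those figures in perspective, the short sketch below works out what fraction of the model is active for each token and roughly how much storage the weights alone would need. The parameter counts come from the announcement; the bytes-per-parameter values are assumptions chosen for illustration, not figures published by the team.

```python
# Figures quoted in the announcement (both approximate).
TOTAL_PARAMS = 1e12    # ~1T total parameters
ACTIVE_PARAMS = 50e9   # ~50B parameters activated per token

# Fraction of the model that takes part in each token's forward pass.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Assumption: storage cost per parameter for two common precisions.
# These are illustrative and not taken from the article.
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}


def weight_size_tb(num_params: float, bytes_per_param: int) -> float:
    """Approximate weight storage in terabytes (1 TB = 1e12 bytes)."""
    return num_params * bytes_per_param / 1e12


print(f"active fraction per token: {active_fraction:.1%}")
for name, nbytes in BYTES_PER_PARAM.items():
    print(f"{name}: ~{weight_size_tb(TOTAL_PARAMS, nbytes):.1f} TB of weights")
```

Only about 5% of the parameters are used per token, which is why per-token compute is far lower than the total size suggests, even though the full set of weights still has to be stored.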

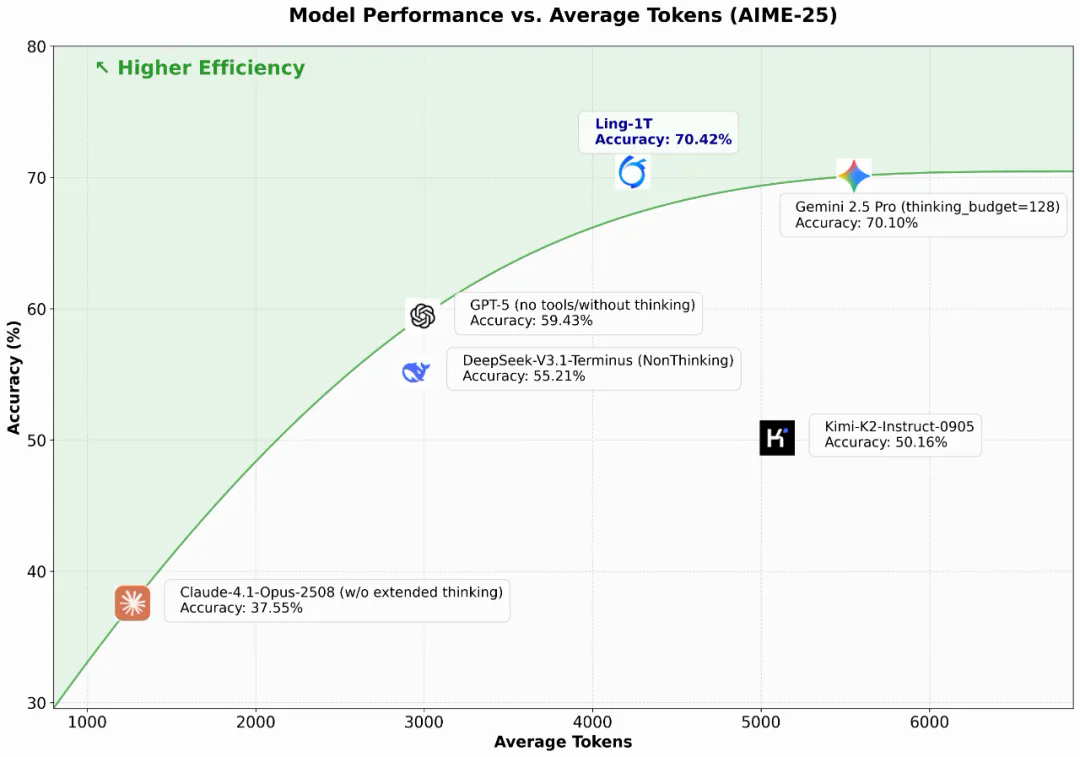

According to the official announcement, Ling-1T outperforms multiple open-source and closed-source flagship models on a number of challenging benchmarks, including code generation, software development, competition-level mathematics, and logical reasoning.

In addition, Ling-1T shows strong transfer and generalization in agentic tool calling: even without large amounts of trajectory data in training, it reaches a tool-call accuracy of approximately 70% after only a small amount of instruction fine-tuning. The team said these capabilities form a key foundation for general intelligence.

Ling-1T is currently available on HuggingFace, ModelScope, and GitHub, and can be downloaded by developers in China and abroad.

HuggingFace: https://huggingface.co/inclusionAI/Ling-1T

👾 ModelScope: https://modelscope.cn/models/inclusionAI/Ling-1T

💻 GitHub: https://github.com/inclusionAI/Ling-V2
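
Because the full weights are very large, it can be useful to inspect the model's configuration before committing to a full download. The following is a minimal sketch using the `huggingface_hub` package; the repository name is taken from the HuggingFace link above, while the helper name `fetch_config` and the presence of a `config.json` file at the repository root are assumptions.

```python
REPO_ID = "inclusionAI/Ling-1T"  # repository name from the HuggingFace link


def fetch_config(repo_id: str = REPO_ID) -> str:
    """Download only config.json and return its local cache path.

    Assumption: the repository has a config.json at its root.
    """
    # Imported lazily so the sketch can be read and loaded without
    # the package installed (pip install huggingface_hub).
    from huggingface_hub import hf_hub_download

    return hf_hub_download(repo_id=repo_id, filename="config.json")


# Example call (requires network access):
# path = fetch_config()
# print(path)
```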