February 27: According to 9to5Google, Google is introducing a new API in Android that lets AI agents operate app functions, similar to what the "Doubao phone" does.

According to the official Android documentation, AppFunctions is a platform capability of Android 16, supported by a Jetpack library, that lets developers expose specific app functionality to AI agents in a structured way.

Developers can generate the necessary code using annotations, metadata, and the KSP compiler, enabling AI agent apps such as Gemini to carry out tasks directly in the background on the device without launching the app's interface.

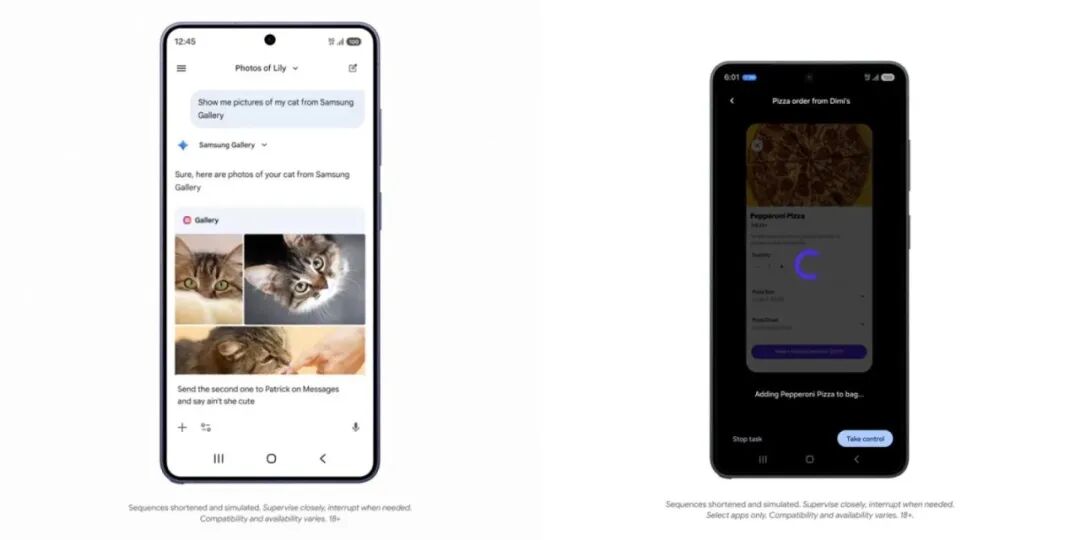

At yesterday's launch of the Samsung Galaxy S26 series, Google showed AppFunctions in real-world use: a user only needs to tell Gemini something like "find me a picture in Samsung Gallery," and the system automatically calls the gallery's function and returns the result directly, with no need to open the app manually.

The feature will launch first on the Galaxy S26 and Pixel 10 series in the United States and South Korea, initially supporting only some food delivery, grocery, and ride-hailing apps, and will roll out to more Samsung devices with One UI 8.5.

Nubia president Ni Fei said on Weibo yesterday that the collaboration between Samsung and Google, while demonstrating automated phone operation, still offers only "partial capability" and falls short of the "all-scenario, system-level" autonomous-driving-style AI experience of the "Doubao phone," the Nubia M153.

He stressed that Nubia took the lead last year in launching the Doubao phone technology preview, pushing on-device AI agents toward deep, system-level use, and said he looks forward to more manufacturers joining in to broaden coverage and deepen real-world adoption.