March 19 news: Yesterday, Yang Zhilin, founder of Moonshot AI (the company behind Kimi), delivered the keynote "How We Scaled Kimi K2.5" at NVIDIA's GTC 2026 conference. The talk laid out Kimi's complete technology roadmap systematically for the first time, built around re-engineering three foundational components of the model:

MuonClip optimizer: To replace the Adam optimizer, in use since 2014, the team built on the Muon optimizer, combining its Newton-Schulz iteration with a QK-Clip mechanism. This solved the attention-logit explosion that occurs in training at the scale of hundreds of billions of parameters and achieved twice the compute efficiency of conventional AdamW.
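The two ingredients named above can be sketched in NumPy. This is a minimal illustration under stated assumptions, not Moonshot's implementation: `newton_schulz_orthogonalize` uses the classic cubic Newton-Schulz iteration (Muon as published uses a tuned quintic polynomial instead), and `muon_step`, `max_attention_logit` and `qk_clip` are hypothetical names. The QK-Clip idea shown is: if the largest attention logit exceeds a threshold `tau`, shrink the query and key projections so that logit is pulled back to `tau`.

```python
import numpy as np


def newton_schulz_orthogonalize(g, steps=30, eps=1e-12):
    """Approximate the orthogonal polar factor U @ Vt of g = U @ diag(s) @ Vt.

    Stand-in: classic cubic Newton-Schulz, X <- 1.5 X - 0.5 X X^T X.
    Muon itself uses a tuned quintic polynomial with fewer steps.
    """
    # Dividing by the Frobenius norm puts every singular value in (0, 1],
    # inside the region where the cubic iteration drives them all to 1.
    x = g / (np.linalg.norm(g) + eps)
    for _ in range(steps):
        x = 1.5 * x - 0.5 * x @ x.T @ x
    return x


def muon_step(w, grad, momentum, lr=0.02, beta=0.95):
    """Hypothetical Muon-style step: accumulate momentum, then apply its orthogonalized form."""
    momentum = beta * momentum + grad
    w = w - lr * newton_schulz_orthogonalize(momentum)
    return w, momentum


def max_attention_logit(w_q, w_k, x):
    """Largest scaled dot-product logit q_i . k_j / sqrt(d) over all token pairs."""
    d = w_q.shape[1]
    return float(((x @ w_q) @ (x @ w_k).T).max() / np.sqrt(d))


def qk_clip(w_q, w_k, x, tau=100.0):
    """Shrink W_q and W_k when the largest logit exceeds the threshold tau."""
    s_max = max_attention_logit(w_q, w_k, x)
    if s_max > tau:
        # Each matrix is scaled by sqrt(tau / s_max), so every logit is scaled
        # by tau / s_max and the largest one lands exactly on tau.
        scale = np.sqrt(tau / s_max)
        w_q, w_k = w_q * scale, w_k * scale
    return w_q, w_k
```

Splitting the shrink factor evenly between `w_q` and `w_k` rescales every logit by the same amount, so the ranking of attention scores is preserved while the maximum is capped. A production version would apply the clip per attention head after each optimizer step; the single-head version here is a simplification.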

Kimi Linear: A hybrid linear attention architecture built on KDA (Kimi Delta Attention). It challenges the convention that "every layer must use full attention" and speeds up decoding by 5 to 6 times in ultra-long contexts of 128K and 1M tokens.
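The hybrid idea can be illustrated with a toy recurrence. This is a simplified sketch, not the KDA kernel: `gated_delta_scan` is a hypothetical gated delta-rule recurrence with a per-channel forget gate, written in the spirit of KDA, and `hybrid_layer_pattern` uses an assumed 3:1 ratio of linear to full-attention layers purely for illustration. The point it shows is that a linear layer carries a fixed-size state, while a full-attention layer (`causal_softmax_attention`) has to revisit every earlier token.

```python
import numpy as np


def gated_delta_scan(q, k, v, beta, alpha):
    """Simplified gated delta-rule recurrence in the spirit of KDA.

    q, k: (T, d_k); v: (T, d_v); beta: (T,) write strength in [0, 1];
    alpha: (T, d_k) per-channel forget gate in (0, 1].
    The state S keeps shape (d_k, d_v) however long the sequence gets.
    """
    T, d_k = k.shape
    S = np.zeros((d_k, v.shape[1]))
    out = np.empty((T, v.shape[1]))
    for t in range(T):
        S = alpha[t][:, None] * S                      # channel-wise decay of old memory
        k_t = k[t] / (np.linalg.norm(k[t]) + 1e-12)    # unit-norm key
        # Delta rule: S <- S + beta * k (v - S^T k)^T, i.e. move the value
        # currently stored under k_t toward v_t instead of blindly adding.
        S = S + beta[t] * np.outer(k_t, v[t] - k_t @ S)
        out[t] = q[t] @ S
    return out, S


def causal_softmax_attention(q, k, v):
    """Full-attention reference: every step attends over all earlier keys and values."""
    T, d = q.shape
    scores = q @ k.T / np.sqrt(d)
    scores = np.where(np.tril(np.ones((T, T), dtype=bool)), scores, -np.inf)
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights /= weights.sum(axis=1, keepdims=True)
    return weights @ v


def hybrid_layer_pattern(num_layers, linear_per_full=3):
    """Hypothetical interleave: `linear_per_full` linear layers, then one full-attention layer."""
    period = linear_per_full + 1
    return ["full" if (i + 1) % period == 0 else "linear" for i in range(num_layers)]
```

With unit keys and `beta = 1`, querying a key returns the value last written under it, and writing the same key twice overwrites rather than accumulates; that overwrite is the "delta" in the delta rule. In a stack such as `hybrid_layer_pattern(8)`, only the layers marked `"full"` keep a key/value cache that grows with context length, which is one plausible reason a hybrid design decodes faster at 128K and 1M tokens.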

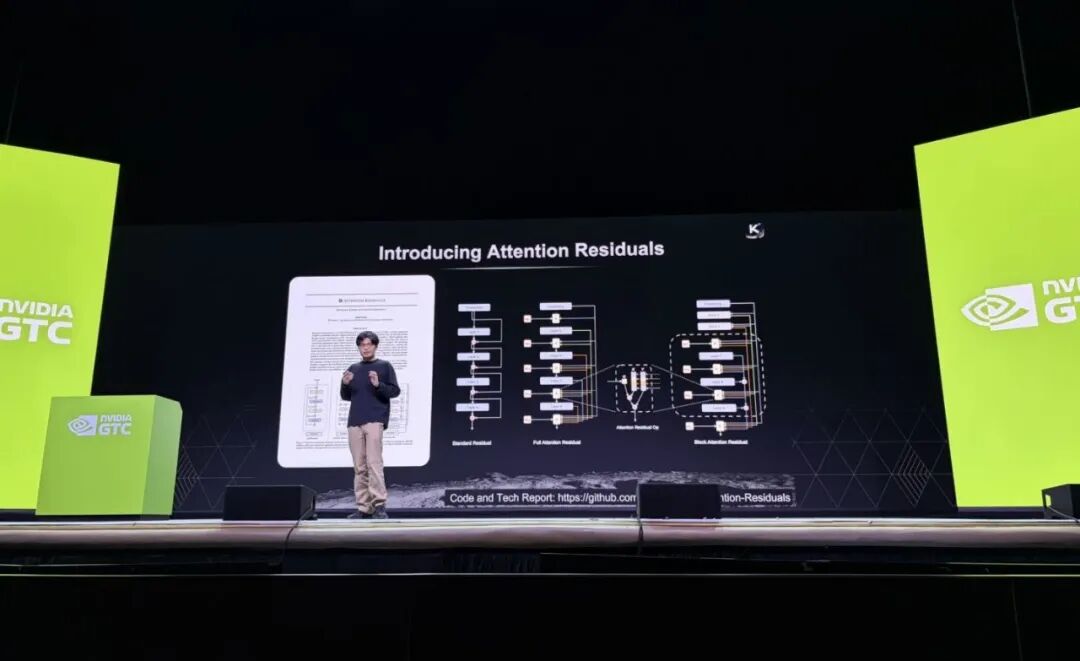

Attention Residuals: For the residual connection, which has gone essentially unchanged for 10 years, the traditional fixed, equal-weight accumulation is replaced by softmax attention across layers, allowing each layer to proactively and selectively extract information from earlier layers.
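The contrast between a standard residual stream and cross-layer attention can be sketched as follows. This is an illustrative assumption, not the published formulation: `attention_residual_input` scores each earlier layer's output with a hypothetical learned per-layer `query` vector, uses RMS-normalized outputs as keys (also an assumption), and takes a softmax over the layer axis separately for each token.

```python
import numpy as np


def rms_norm(x, eps=1e-6):
    """Normalize the last axis to unit root-mean-square."""
    return x / np.sqrt(np.mean(x * x, axis=-1, keepdims=True) + eps)


def standard_residual_input(layer_outputs):
    """Classic residual stream: the next layer sees the unweighted sum of all earlier outputs."""
    return np.sum(layer_outputs, axis=0)


def attention_residual_input(layer_outputs, query):
    """Cross-layer softmax attention over earlier outputs (hypothetical sketch).

    layer_outputs: (L, T, d), the embedding plus each earlier layer's output.
    query: (d,), an assumed learned per-layer vector that scores each source layer.
    """
    keys = rms_norm(layer_outputs)                   # (L, T, d)
    scores = keys @ query                            # (L, T): one score per source layer per token
    scores = scores - scores.max(axis=0, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=0, keepdims=True)    # softmax over the layer axis
    mixed = np.einsum("lt,ltd->td", weights, layer_outputs)
    return mixed, weights
```

With a zero `query` every source layer gets the same weight, so the mix reduces to the standard residual sum scaled by 1/L; learning the query is what lets each layer depart from that fixed mix and pick which earlier layers to draw from.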

Of the three, the release of Attention Residuals drew particularly wide interest across the industry: Musk called it "impressive"; OpenAI co-founder Karpathy remarked that "it seems we have not been taking 'Attention Is All You Need' literally enough"; and the lead inventor of OpenAI o1 called it the beginning of "Deep Learning 2.0".

Yang Zhilin stated that Kimi will stay on the open-source path, contributing its underlying innovations, MuonClip, Kimi Linear and Attention Residuals, to the open-source community.