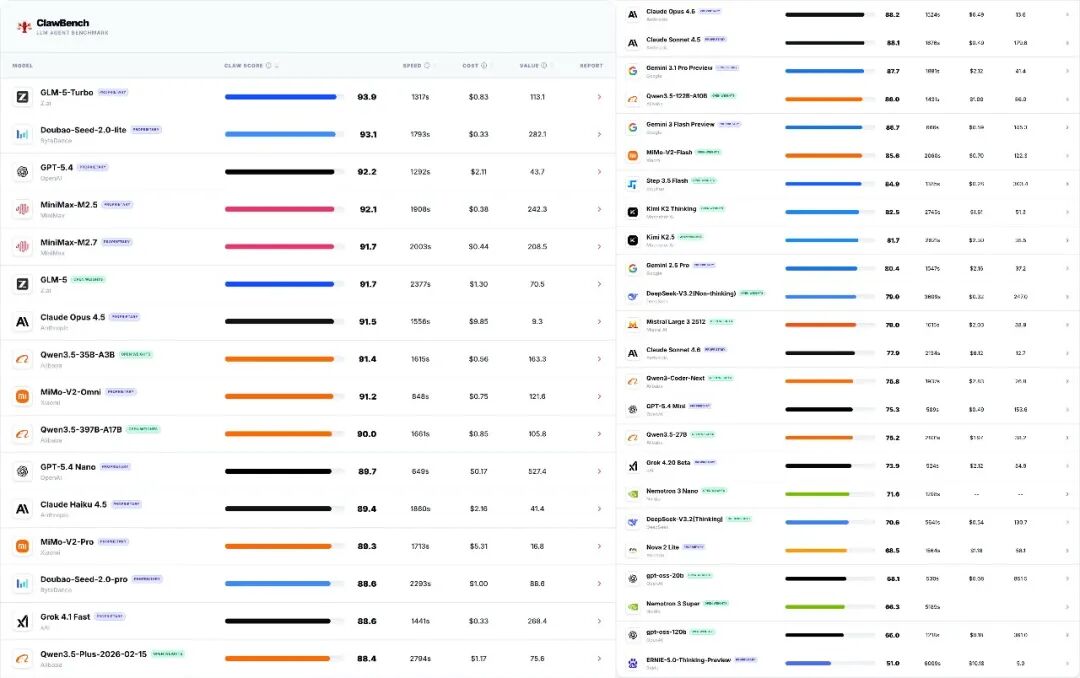

31 March: Agent evaluation organization ClawBench released its large-model leaderboard yesterday. It covers 30 complex agent tasks spanning five core business scenarios: office collaboration, information retrieval, content creation, data processing, and software engineering.

The leaderboard includes more than 40 mainstream models. Chinese-developed models take four of the top 10 places worldwide, including models from Zhipu, ByteDance, and Xiaomi.

GLM-5-Turbo tops the leaderboard with a CLAW SCORE of 93.9, making it the best-performing model in the evaluation.

ByteDance's Doubao-Seed-2.0-lite ranks second with 93.1 at a cost of only $0.33, the lowest on the leaderboard.

MiMo-V2-Omni ranks ninth with 91.2 and is the fastest model, completing the full task flow in only 848 seconds.

Across the overall leaderboard, OpenAI's GPT-54 ranks third with 92.2, Claude Opus 4.5 ranks seventh with 91.5, and Alibaba's Qwen3.5-35B-A3B ranks eighth with 91.4.

ClawBench uses a sandboxed execution mechanism: each model performs its tasks in a realistically simulated business development environment that deliberately embeds engineering challenges such as "non-standard naming", "missing directories", and "date traps".

For scoring, ClawBench introduced a "triple scoring mechanism". Depending on the task type, it uses automated script assertions, a frontier LLM acting as an "expert assessor", and a hybrid score that weights the two, with the aim of more accurately reflecting a model's real deployment capability in complex workflows.
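As a rough illustration of how such a hybrid score might be combined, here is a minimal Python sketch. It is not ClawBench's actual implementation: the names `TaskResult` and `hybrid_score`, the 0 to 100 scale for each component, and the default weight of 0.5 are all assumptions made for the example, since the weights used by ClawBench are not stated.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TaskResult:
    """Scores for one task; both fields are hypothetical, on a 0-100 scale."""
    assertion_score: Optional[float]  # from automated script assertions, if the task has them
    judge_score: Optional[float]      # from an LLM acting as assessor, if the task uses one


def hybrid_score(result: TaskResult, assertion_weight: float = 0.5) -> float:
    """Combine the two component scores into one task score.

    assertion_weight is an assumed parameter: the share given to the
    script-assertion score when both components are present.
    """
    if not 0.0 <= assertion_weight <= 1.0:
        raise ValueError("assertion_weight must be between 0 and 1")
    if result.assertion_score is None and result.judge_score is None:
        raise ValueError("at least one component score is required")

    # Tasks scored by only one method fall back to that method's score.
    if result.judge_score is None:
        return result.assertion_score
    if result.assertion_score is None:
        return result.judge_score

    # Tasks scored by both methods get a weighted average of the two.
    return (assertion_weight * result.assertion_score
            + (1.0 - assertion_weight) * result.judge_score)
```

For instance, `hybrid_score(TaskResult(assertion_score=100.0, judge_score=80.0))` averages the two components with equal weight, while a task that only has script assertions keeps its assertion score unchanged.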