Today I'm sharing an AI video production process based on Seedance 2.0. To a certain extent, it works around a limitation of Seedance 2.0: you cannot give it detailed, realistic character images as references. The process helps keep the character consistent anyway.

Tools and models used in this process:

Image model: Banana Pro

Video models: Seedance 2.0, plus a conventional first-frame (image-to-video) model (Seedance 1.5 Pro in this case)

Tools: Dream + LibTV (you can substitute other tools that give you access to the models above; choose whichever you prefer)

Note: make sure all the material you use is AI-generated. Do not use photos of real people as references.

Process I: Generating the main video content

The key focus of this stage is preparing the prompts and reference materials.

Reference materials: character images (screenshots or AI-generated), scene images (generated with Banana Pro), and reference videos (footage from a previous drama).

Prompt structure: reference objects + shot content [subject behavior (characters, actions, expressions, etc.) + camera (movement, angle, shot size) + overall constraints (style, sound, visual elements, etc.)]
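If you reuse this structure across many prompts, it can help to fill it in from a template so no component is forgotten. The following is a minimal sketch, assuming the prompt is plain text; the `Shot` class, `build_prompt` function, and the "Shot N:" / "Overall:" labels are hypothetical conventions for illustration, not something Seedance requires.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    subject: str  # characters, action, expression
    camera: str   # camera movement, angle, shot size


def build_prompt(references: str, shots: list[Shot], constraints: str) -> str:
    """Assemble: reference objects + [subject + camera per shot] + overall constraints."""
    parts = [references]
    for i, shot in enumerate(shots, start=1):
        parts.append(f"Shot {i}: {shot.subject}; camera: {shot.camera}.")
    parts.append(f"Overall: {constraints}")
    # Wrap in square brackets, matching how the example prompts are written.
    return "[" + " ".join(parts) + "]"


prompt = build_prompt(
    "Heroine @Image 1, scene @Image 2, dragon @Image 3.",
    [
        Shot("the heroine stands at the cliff edge, the dragon flies toward her", "high-angle wide shot"),
        Shot("tense, determined expression", "slow push-in close-up"),
    ],
    "realistic film look, ambient sound only, no background music.",
)
print(prompt)
```

Keeping the overall constraints as a single trailing part mirrors the structure above: they apply to the whole clip rather than to one shot.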

Example prompts:

[A video featuring the heroine @Image 1. Fantasy drama, live action, realistic film texture. Setting: the cliffs of a frozen mountain range, with strong visual impact and a sense of oppression. No background music, only the sound of the blizzard and the dragon. Shot 1 (@Image 2): high-angle shot; the heroine stands at the cliff edge above a vast, cloud-filled abyss, and in the distance the dragon @Image 3 flies toward her. Shot 2: cut to a push-in shot on the heroine, showing her tense, determined expression and the overwhelming, oppressive scale of what she sees. Shot 3: the heroine rides the dragon @Image 3 and dives off the cliff, from top to bottom, with the camera following the dragon and the heroine to convey the depth of the cliff. Use cuts and varied angles to give the whole piece rhythm and a cinematic feel. Generate only ambient sound, no background music.]

[Continuing from @previous video, in the same cinematic fantasy style. Setting: a deep, cloud-filled abyss, with strong visual impact and a sense of oppression. No background music, only the sound of the blizzard and the dragon. Shot 1: riding the dragon, the heroine keeps flying down into the mist of the abyss, from top to bottom, with a follow shot tracking her. Suddenly an enormous European-style dragon @Image 1 appears in front of her (the dragon is huge, hundreds of meters tall, and its large, glowing blue eyes are as big as the surrounding cliff peaks, creating an immense, unreal sense of oppression). Shot 2: close-up of the heroine's face with a push-in shot, showing her tense, determined expression. Use cuts and varied angles to give the whole piece rhythm and a cinematic feel. Generate only ambient sound, no background music.]

Generation tips:

- Generate the first prompt 3-4 times, 15 seconds each.

- Pick the take that best fits the scene as the reference video for the second segment; the other takes can be used as supplementary shots.

- Generate the second video using the reference video you just selected. Again, generating 3-4 times per prompt is recommended, and the takes can be combined in a short edit.
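The take-selection step above can be sketched as a small bookkeeping helper: after reviewing each batch of takes, the best one becomes the reference for the next segment and the rest are kept as supplementary shots. This is a minimal sketch under assumptions; the `Take` class, the manual `rating` score, and `pick_reference` are hypothetical and stand in for your own judgment while reviewing, not for any tool's API.

```python
from dataclasses import dataclass


@dataclass
class Take:
    take_id: int
    duration_s: float
    rating: int  # hypothetical manual score (e.g. 1-5) given after reviewing the take


def pick_reference(takes: list[Take]) -> tuple[Take, list[Take]]:
    """Return the best-rated take (reference for the next segment) and the rest (supplementary shots)."""
    if not takes:
        raise ValueError("generate at least one take first")
    best = max(takes, key=lambda t: t.rating)  # on ties, max() keeps the earliest take
    extras = [t for t in takes if t is not best]
    return best, extras


# Three 15-second takes of the first prompt, rated after viewing.
takes = [Take(1, 15, 3), Take(2, 15, 5), Take(3, 15, 4)]
reference, supplementary = pick_reference(takes)
print(reference.take_id, [t.take_id for t in supplementary])
```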

After these steps, the AI video is nearly complete. However, character consistency is not especially good: when passing the character reference, we cannot upload a high-resolution image, or even the character image itself, and can only reference the person as they appear in the video.

The character as it first appeared in the generated video:

The following process is a practical remedy for this problem.

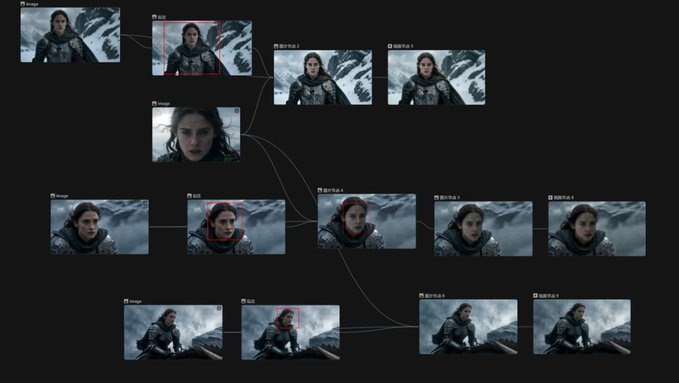

Process II: Face replacement

1. Review the direct output of Seedance 2.0 from Process I and select the shots in which the character's features were not faithfully reproduced.

2. Capture key frames from those shots as reference images for a second round of image generation.

3. Crop the main character's face (again, AI-generated facial detail) as a reference image.

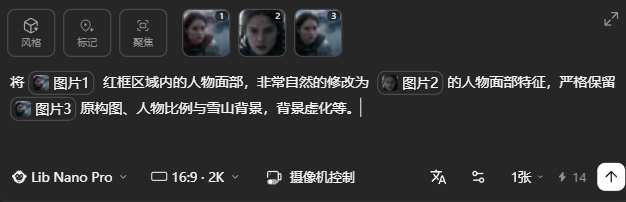

4. Select the Banana Pro model and enter the prompt:

[Very naturally replace the face of the person in the red-box area of Image 1 with the face of the person in Image 2, strictly preserving the original Image 1: the proportion between the person and the snowy-mountain background, the surrounding context, and so on.]

Note: this prompt is my own, and it produces a fairly natural face replacement. If you have a better prompt, feel free to share it in the comments.

Process III: Close-up and face shots

The last step is image-to-video generation, which is simple because each clip is a single shot.

I again chose Seedance 1.5 Pro, which offers good overall quality and value for money. A length of 4-5 seconds per clip is enough.

Two example prompts:

[Follow shot tracking the heroine on the dragon; keep the subject unchanged, only the background moves; her expression resolute]

[The blizzard blows her hair and clothes, with flashes of light behind her; slow push-in shot; the heroine's tense, determined expression and the oppressive feeling of facing a huge, unreal giant]

A few points to note:

1. Whenever possible, choose single-shot clips for replacement; otherwise the other video model may produce a worse result than the original video.

2. Individual models drift somewhat in parameters such as color tone, so minor adjustments will be needed during editing.

3. The following shots are not recommended for replacement (to avoid unnecessary rerolls):

   - Very short shots
   - Large scenes and wide vistas
   - Shots with a lot of motion, such as dragon riding or high-speed flight
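Points 1 and 3 amount to a checklist for deciding which clips are worth sending through face replacement and image-to-video. A minimal sketch in Python follows; the `Clip` fields, the `worth_replacing` helper, and the 2-second cutoff for a "very short" shot are hypothetical values chosen for illustration, not figures from this workflow.

```python
from dataclasses import dataclass

MIN_DURATION_S = 2.0  # hypothetical cutoff for a "very short" shot


@dataclass
class Clip:
    name: str
    duration_s: float
    single_shot: bool   # no cut inside the clip (point 1)
    wide_scene: bool    # large scene or wide vista (point 3)
    heavy_motion: bool  # e.g. dragon riding, high-speed flight (point 3)


def worth_replacing(clip: Clip) -> bool:
    """True if the clip is a reasonable candidate for face replacement plus image-to-video."""
    return (
        clip.single_shot
        and clip.duration_s >= MIN_DURATION_S
        and not clip.wide_scene
        and not clip.heavy_motion
    )


clips = [
    Clip("heroine close-up", 4.0, True, False, False),
    Clip("dive off the cliff", 5.0, True, False, True),
    Clip("abyss vista", 6.0, True, True, False),
    Clip("quick cut", 1.0, True, False, False),
]
print([c.name for c in clips if worth_replacing(c)])
```

In practice these flags come from watching the footage; the point of writing them down is simply to skip clips that are likely to need many rerolls.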

Finally, import each video into your editing software for some simple post-processing, and the video is finished.

Combined with Seedance 2.0, this process completes a piece more efficiently, and the subsequent face-replacement step does not have to go through review or wait in a queue. It can also salvage AI footage that would otherwise be discarded because individual shots are imperfect.