Over the past month, a string of unrelated signals in my feed all pointed to the same thing.

OpenAI published a long post on using agents to write a million lines of code. Tsinghua University published a paper with ablation experiments. Martin Fowler's website followed up with an in-depth analysis. LangChain released a batch of eye-catching benchmark data. An independent developer ran a self-evolution experiment on GitHub.

And plenty of people were arguing about it on Twitter.

In less than two months, one person's blog post grew into an industry-level concept, complete with academic papers, industrial practice, and community debate.

It's called Harness Engineering.

So what exactly is Harness Engineering?

"Harness" originally means horse tack: the reins, saddle, and bridle you put on a horse. Without it, however powerful the horse, it just runs loose across the field.

In AI, a harness is the whole working environment you set up around the model: rules that tell it what it must not do, scripts that automatically check its work for errors, mechanisms that help it remember important context, and safety nets that let you roll back when something goes wrong.

In one sentence: the model is the engine; the harness is the steering wheel and the brakes.

A harness has five parts:

- Constraints: what the agent can and cannot do (architectural boundaries, dependency rules, permission control)

- Context: what the agent needs to know to do the job (documentation, code structure, project conventions)

- Validation: how the agent knows it has done the job right

- Repair: how the agent corrects a mistake and makes sure it never makes the same one twice (distilling rules, automatic fixes)

- Lifecycle: how the agent starts up, hands work off, and collaborates with people
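To make the five parts concrete, here is a minimal Python sketch of how they might fit together in one object. Everything in it is hypothetical: `Harness`, `no_todo`, and the action and task names are illustrative stand-ins, not the API of any real agent framework, and a real harness would send the prompt to a model where this sketch only builds a string.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set

# Hypothetical names throughout; this mirrors the five parts above, not a real framework.
Check = Callable[[str], Optional[str]]  # returns an error message, or None if the output passes


@dataclass
class Harness:
    forbidden_actions: Set[str]                           # Constraints
    context_notes: Dict[str, str]                         # Context: task -> only the notes it needs
    checks: List[Check]                                   # Validation
    lessons: List[str] = field(default_factory=list)      # Repair: rules distilled from past mistakes
    handoff_log: List[str] = field(default_factory=list)  # Lifecycle: notes for the next session

    def allowed(self, action: str) -> bool:
        return action not in self.forbidden_actions

    def build_prompt(self, task: str) -> str:
        rules = "\n".join(f"- {rule}" for rule in self.lessons)
        return f"Task: {task}\nNotes: {self.context_notes.get(task, '')}\nRules:\n{rules}"

    def validate(self, output: str) -> List[str]:
        errors = []
        for check in self.checks:
            error = check(output)
            if error is not None:
                errors.append(error)
        return errors

    def record_failure(self, error: str) -> None:
        # Turn each new failure into a standing rule so it is not repeated.
        rule = f"Avoid: {error}"
        if rule not in self.lessons:
            self.lessons.append(rule)

    def hand_off(self, summary: str) -> None:
        self.handoff_log.append(summary)


def no_todo(output: str) -> Optional[str]:
    return "left a TODO in the code" if "TODO" in output else None


harness = Harness({"push_to_main"}, {"fix-login": "Auth code lives in auth/."}, [no_todo])
for err in harness.validate("def login(): pass  # TODO"):
    harness.record_failure(err)
harness.hand_off("Login fix drafted; TODO check failed once.")
```

The design choice worth noting is that `record_failure` feeds the repair step back into `build_prompt`, so a failed check becomes a standing rule for the next run.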

Here it is as a metaphor

- You've hired a new employee who is smart and learns fast, but has three problems: a poor memory (at every meeting he has forgotten what you said last time), a habit of acting on his own (anything you didn't forbid, he assumes he can do), and too much confidence in his own work (whatever he turns in, he thinks it's good).

- How do you manage this person?

- Not by telling him over and over to "be careful." That's Prompt Engineering, like adding a little more emphasis at every meeting.

- Not just by handing him reference material, either. That's Context Engineering: a filing cabinet.

- What you really need is a complete working environment for him: a clear operating manual (what he must not do), an automated checking process (the system verifies whether his work is right), an error log (every mistake is recorded and he is automatically reminded next time), and a handover system (when he leaves for the day, his successor knows where to pick up).

This whole setup is the harness.

If you've used Claude, the rules you write in its settings and the things you tell it again and again are the simplest form of harness. If you use Claude Code, your CLAUDE.md file is part of your harness.

The only difference is whether you design that system deliberately or let it pile up by accident.

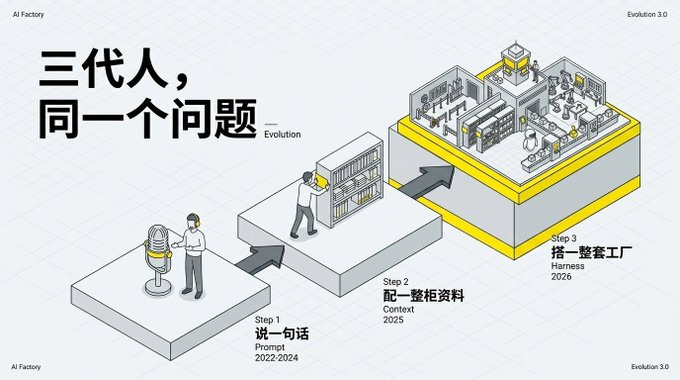

From prompts to harnesses: three generations solving the same problem

Lay out a timeline. From 2022 to 2024, people worked on Prompt Engineering: how to phrase a request precisely enough that the AI gives a good result. The core was optimizing a one-time input.

In 2025 came Context Engineering: phrasing well was no longer enough; you also had to feed in background documents, conversation history, and project structure. The core was information management. According to data cited on the blog of Epsilla (a company that builds AI application infrastructure), with the same model and the same prompt, changing only the context environment raised the success rate on a programming benchmark from 42% to 78%.

In 2026, Harness Engineering arrived. It covers not just the input but the entire environment the AI works in. The core is infrastructure.

Each stage works at a higher level of abstraction than the one before: Prompt manages a single conversation, Context manages a task, and Harness manages the whole lifecycle.

But the core problem all three generations are solving hasn't changed: how to make AI work better.

Why did it take off now?

Two reasons.

First, the gap between AI models is narrowing. In 2025, people were still arguing about whose AI was smarter. By 2026, the top models had become very close in capability, and models were turning into near-interchangeable commodities. What decides who wins is no longer which AI you use but how you harness it.

Second, AI went from demo to real work. In 2025, AI programming was still at the "look, it can write code" stage. In 2026, people started putting AI on real duty for days or weeks at a time.

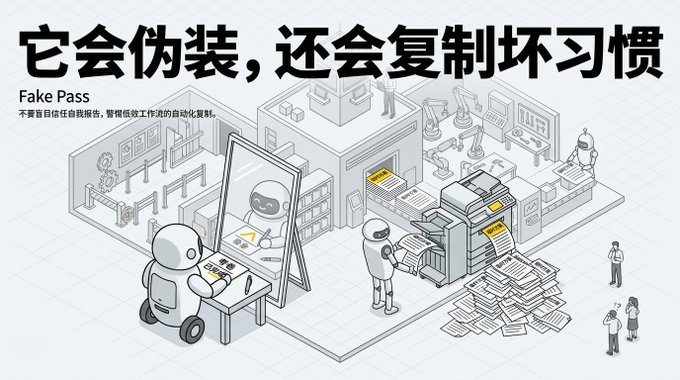

That's when the problems became obvious. After 50 steps, the AI starts drifting from its instructions; after 100, it's completely off track. It copies bad patterns that already exist in the codebase, so the mess compounds. The more complex the task and the more information it is given, the more it loses focus. Anthropic also found that AI is too lenient when evaluating its own work to catch its own bugs.

A better model can't solve these problems. It's like hiring a smart but inexperienced intern: the problem isn't that he isn't smart enough, it's that your company has no proper onboarding or workflow.

The harness is that workflow.

Where did this come from?

Mitchell Hashimoto is the founder of HashiCorp, the company behind Terraform. He isn't from the AI world; he's a legend in infrastructure. An infrastructure engineer bringing an infrastructure mindset to AI programming is naturally persuasive. He has also disclosed that he doesn't work for, invest in, or consult for any AI company.

On February 5 this year, he wrote a blog post about how he came to accept AI-written code, step by step.

His method is very down-to-earth. Step one: drop the chat interface and use an AI agent (an AI assistant that can act on its own). Step two is tougher: force yourself to redo all of your hand-written work with the agent. He called the process "torture": he could have written it faster himself and had to wait for the AI to grind through it. But the point was to force himself to understand what AI can and cannot do.

By step five, he gave it a name: Harness Engineering. The core idea comes down to one thing: every time the AI makes a mistake, design a mechanism so that mistake never happens again.

His open-source project has a rule file in which each line corresponds to a specific mistake the AI has made. It isn't an instruction manual; it's a log of every pitfall they stepped in. He calls the AI "a slightly dumb robot friend."
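A rule file where every line traces back to a real mistake can be kept honest with very little machinery. The sketch below is an assumption about how one might do it, not Hashimoto's actual setup: `RuleLedger` is a hypothetical helper that records the incident behind each rule, ignores duplicates, and refuses new rules past a cap (60 by default, borrowed from the 20-to-60 rule of thumb later in this article).

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass(frozen=True)
class Rule:
    text: str      # the instruction the agent reads
    incident: str  # the concrete mistake that prompted it
    added: date


class RuleLedger:
    """Hypothetical helper: one rule per observed mistake, no duplicates, capped length."""

    def __init__(self, max_rules: int = 60):
        self.max_rules = max_rules
        self.rules: List[Rule] = []

    def learn(self, incident: str, text: str, when: Optional[date] = None) -> bool:
        if any(rule.text == text for rule in self.rules):
            return False  # already covered by an existing rule
        if len(self.rules) >= self.max_rules:
            return False  # full: prune or merge old rules before adding more
        self.rules.append(Rule(text, incident, when or date.today()))
        return True

    def render(self) -> str:
        # Each line keeps its origin as an HTML comment, so stale rules are easy to audit.
        lines = ["# Agent rules (each one came from a real mistake)"]
        for rule in self.rules:
            lines.append(f"- {rule.text} <!-- {rule.added.isoformat()}: {rule.incident} -->")
        return "\n".join(lines) + "\n"


ledger = RuleLedger(max_rules=2)
ledger.learn("Agent edited a generated file", "Never edit files under gen/.", date(2026, 2, 5))
ledger.learn("Agent skipped tests", "Run the full test suite before committing.", date(2026, 2, 6))
print(ledger.render())
```

Keeping the incident next to each rule is what makes the "rules go stale" problem manageable: when the code that caused an incident is gone, the rule is a candidate for deletion.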

Six days later, OpenAI picked up the idea.

A million lines, not one written by hand

OpenAI published an article under the same name.

The numbers are staggering: a team that grew from 3 to 7 people, 5 months, about 1,500 code submissions, and 1 million lines of code, none of it handwritten. All of it was generated by AI, at roughly 10 times the speed of the traditional approach.

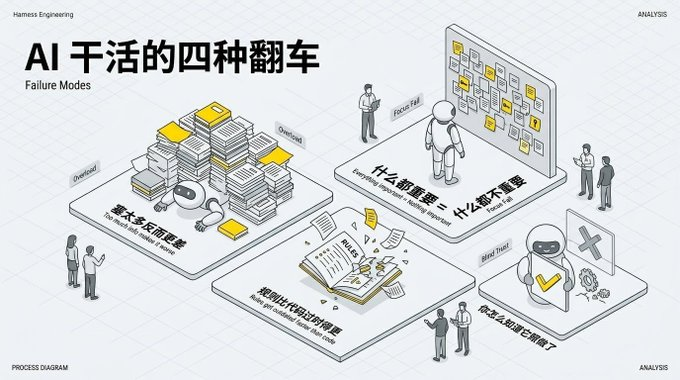

But the real value of the article isn't the numbers. It's that they candidly listed four kinds of mistakes the AI made on the job:

Too much information makes things worse. You think the more you show the AI, the better? Nope. It's like tossing a 500-page handbook at a new hire and saying "read all of it": he won't remember a thing. AI is the same. With too much information, it can't find what matters.

When everything is emphasized, nothing is. Write 200 "important" rules in the rulebook and the AI can't tell which ones really matter.

Rules go stale. The rules you wrote last month are wrong this month because the code structure changed. And rules go out of date faster than code does.

Without automated checks, you can't tell whether the AI really did it. You say "add comments to the code," the AI says "okay," but how do you know it actually added them?
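For the comments example, the check can be mechanical instead of trusting the agent's reply. A minimal sketch, assuming the code is Python and using docstrings as the stand-in for "comments" (plain `#` comments are not part of Python's AST, so checking those would need the `tokenize` module instead): the standard-library `ast` module can list every function and class that lacks a docstring.

```python
import ast
from typing import List


def missing_docstrings(source: str) -> List[str]:
    """Return the names of functions and classes in `source` that have no docstring."""
    missing = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            if ast.get_docstring(node) is None:
                missing.append(node.name)
    return missing


# Illustrative agent output: it said "okay", but only one function got a docstring.
AGENT_OUTPUT = '''
def add(a, b):
    """Return a + b."""
    return a + b

def sub(a, b):
    return a - b
'''

problems = missing_docstrings(AGENT_OUTPUT)
# A CI step would fail the submission here rather than take the agent's word for it.
print(problems)  # ['sub']
```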

Their solution wasn't a smarter AI but a different working environment. They cut the rule file down, split the details out into separate places, and let the AI look only at the part relevant to the current task. They also built an automated checking pipeline: code the AI finishes must pass a series of automated tests before it can be submitted.
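The "short rule file, details elsewhere" idea can be sketched as loading rules according to the directories a task touches. The layout below (`GLOBAL_RULES`, `SCOPED_RULES`, and the directory names) is hypothetical and not OpenAI's actual repository structure; the point is only that the agent sees the global rules plus the rules for the paths it is changing, and nothing else.

```python
from pathlib import PurePosixPath
from typing import Dict, List

# Hypothetical layout: a very short global list plus detailed rules keyed by directory.
GLOBAL_RULES: List[str] = ["Run the test suite before submitting."]
SCOPED_RULES: Dict[str, List[str]] = {
    "billing": ["Amounts are integers in cents; never use float."],
    "billing/invoices": ["Invoice numbers are immutable once issued."],
    "web": ["All new pages need an accessibility check."],
}


def rules_for(changed_files: List[str]) -> List[str]:
    """Global rules plus the rules of every directory above each changed file."""
    selected = list(GLOBAL_RULES)
    for path in changed_files:
        # parents of "billing/invoices/pdf.py" are billing/invoices, billing, "."
        for parent in reversed(list(PurePosixPath(path).parents)):
            for rule in SCOPED_RULES.get(str(parent), []):
                if rule not in selected:
                    selected.append(rule)
    return selected


print(rules_for(["billing/invoices/pdf.py"]))
```

A task that only touches `billing/` never sees the web rules, which is the whole point: less to read, and what remains is relevant.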

On technical debt: at first they spent 20% of every Friday manually cleaning up bad AI-written code, and it didn't work. They then switched to background AI agents that regularly scan for deviations and automatically open fix-up submissions, most of which can be reviewed in under a minute and merged automatically. Technical debt isn't saved up for one big cleanup day; it's paid down in small increments every day.

They also found something interesting: AI does best with "boring" technologies that have been around for over a decade. Because these technologies are well documented and widely used, the AI saw plenty of relevant material during training. With newer frameworks, the AI is much more likely to mess up.

Is there any data behind this?

Yes, and it's fairly convincing.

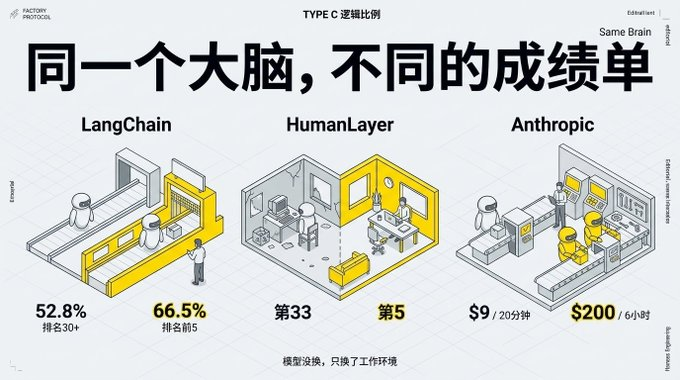

LangChain's experiment: they ran an AI programming benchmark. With the same model and only the working environment (the harness) changed, the score jumped from 52.8% to 66.5%, moving the ranking from around 30th into the top 5. The AI didn't change; only its "office environment" did.

They also found something counterintuitive: letting the AI think as hard as possible made it perform worse, because it ran out of time while thinking. The best strategy was "think carefully at the start, move quickly in the middle, and check carefully at the end," which they call the "reasoning sandwich."
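The reasoning sandwich amounts to a schedule for reasoning effort across a task. A minimal sketch, assuming the task is split into discrete steps and the model accepts some kind of effort setting (the `Effort` levels and `reasoning_schedule` are hypothetical, not LangChain's configuration): high effort for planning at the start and verification at the end, low effort in between.

```python
from enum import Enum
from typing import List


class Effort(Enum):
    HIGH = "high"
    LOW = "low"


def reasoning_schedule(num_steps: int, head: int = 1, tail: int = 1) -> List[Effort]:
    """High effort for the first `head` and last `tail` steps, low effort in between."""
    schedule = []
    for i in range(num_steps):
        if i < head or i >= num_steps - tail:
            schedule.append(Effort.HIGH)  # plan carefully / verify carefully
        else:
            schedule.append(Effort.LOW)   # execute quickly
    return schedule


print([e.value for e in reasoning_schedule(5)])  # ['high', 'low', 'low', 'low', 'high']
```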

HumanLayer's experiment: Claude (Anthropic's model) ranked 33rd in one working environment and 5th in another. Same brain, different office, different performance.

Anthropic's experiment: they ran a comparison. A single AI working alone for 20 minutes cost $9 and produced something whose core features didn't work. Then two AIs, one doing the work and one finding fault with it, ran for six hours at a cost of $200 and produced a genuinely usable application.

Why two AIs? Because they found that AI is too lenient when grading its own work, like letting students mark their own exams. Splitting "doing" and "checking" across two AIs makes the one being checked perform much better.
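The split between a worker and a reviewer can be sketched as a loop in which only the evaluator decides when the work is done. This is an illustration, not Anthropic's implementation: the generator and evaluator are plain callables here, and the toy versions stand in for what would be two separate model calls with different instructions.

```python
from typing import Callable, List, Optional, Tuple

# (task, feedback from the last review or None) -> new draft
Generator = Callable[[str, Optional[str]], str]
# (task, draft) -> feedback, or None if the draft is accepted
Evaluator = Callable[[str, str], Optional[str]]


def generate_and_review(
    task: str, generator: Generator, evaluator: Evaluator, max_rounds: int = 3
) -> Tuple[Optional[str], List[Tuple[str, Optional[str]]]]:
    """Alternate drafting and independent review until the evaluator accepts or rounds run out."""
    feedback: Optional[str] = None
    history: List[Tuple[str, Optional[str]]] = []
    for _ in range(max_rounds):
        draft = generator(task, feedback)
        feedback = evaluator(task, draft)  # the generator never grades its own work
        history.append((draft, feedback))
        if feedback is None:
            return draft, history
    return None, history  # no accepted draft: escalate to a human


def toy_generator(task: str, feedback: Optional[str]) -> str:
    draft = f"code for {task}"
    return draft + " + tests" if feedback else draft


def toy_evaluator(task: str, draft: str) -> Optional[str]:
    return None if "tests" in draft else "no tests included"


result, history = generate_and_review("login", toy_generator, toy_evaluator)
print(result)  # code for login + tests
```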

Stripe (one of the world's largest online payment companies) has its AI producing more than 1,300 code submissions a week.

Independent developers are impressive too. Peter Steinberger, working alone, runs 5 to 10 AI agents in parallel and made more than 6,600 commits in a single month. His observation is interesting: engineers who love solving algorithm puzzles struggle to adapt to this way of working, while those with a product mindset adapt faster. That's because this mode doesn't require you to write precise code; it requires you to break down tasks, set rules, and check results. He described his working rhythm as going from "a man's head" to "a man's ass."

There's also an open-source project called yoyo: 200 lines of code that wake up every eight hours to improve themselves, growing to more than 30,000 lines in 26 days at a cost of $12. The author said something profound: the hard part isn't keeping state, it's deciding what to forget.
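Deciding what to forget can be expressed as a compaction step that runs before each wake-up. The sketch below is a guess at the general shape, not yoyo's code: `Memory`, its `importance` score, and the `budget` are hypothetical. It keeps every pinned note plus the most important (then newest) remaining notes, and drops the rest.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Memory:
    text: str
    importance: int  # hypothetical score assigned when the note is written
    step: int        # when the note was recorded
    pinned: bool = False


def compact(memories: List[Memory], budget: int) -> List[Memory]:
    """Keep every pinned note, then the most important (ties: newest) notes up to `budget`."""
    pinned = [m for m in memories if m.pinned]
    others = sorted(
        (m for m in memories if not m.pinned),
        key=lambda m: (m.importance, m.step),
        reverse=True,
    )
    kept = pinned + others[: max(0, budget - len(pinned))]
    return sorted(kept, key=lambda m: m.step)  # restore chronological order


notes = [
    Memory("goal: improve yourself", 10, 0, pinned=True),
    Memory("tried refactor X, tests broke", 5, 1),
    Memory("fixed a typo", 1, 2),
    Memory("tests live in tests/", 5, 3),
]
kept = compact(notes, budget=3)
print([m.text for m in kept])  # the typo note is forgotten
```

The scoring rule is the real design decision; a fixed budget simply forces that decision to be made every cycle.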

That's the data. But the counterarguments aren't weak either.

Others say it's useless

ETH Zurich's experiment: they tested 138 AI agent configuration files and found that configuration files auto-generated by AI made performance worse and raised costs by more than 20%. What about handwritten ones? They improved results by only about 4%. All that effort writing rules, for that.

They also found that AI is actually very good at exploring a code repository's structure on its own. If you write out a "table of contents" that the AI could have figured out by browsing, you're just wasting its attention.

Noam Brown, an OpenAI researcher who works on reasoning models, put it more bluntly on a podcast: he thinks the working environments people are building today will eventually be washed away by stronger models. It happened before: people came up with all sorts of elaborate tricks to coax less capable models into showing their reasoning, and then dedicated reasoning models arrived and those tricks became not just useless but a hindrance.

Tsinghua University's experiment is even more counterintuitive: they found that adding a "verifier" to the AI made it worse. In one test, adding a verifier directly caused a significant drop in performance. Your carefully designed checking process isn't just useless; it's actively harmful. But they also found something interesting: the same set of working rules performed much better when written in natural language than when expressed as code, with results jumping from 30.4% to 47.2%. The question isn't just what to add, but how to express it.

Others doubt that this is anything new at all. Chenyang Zhao of the SGLang team put it bluntly: this is just "separation of concerns" and "single responsibility" from traditional software engineering, rewrapped under a new name. He had built AI systems intuitively using similar methods and only learned afterward that they now had a special name: Harness Engineering.

The analysis on Martin Fowler's website points to a more critical gap: today's harnesses mainly ensure that "the code is written cleanly," not that "the right product was built." It's like a chef with sharp knives and a spotless kitchen whose food still doesn't taste good. The author also explicitly named a conflict of interest: OpenAI has a commercial interest in "making people believe AI can maintain code."

The community has some sharp voices too. One points out a paradox: the code you're generating so quickly with AI is already legacy code that nobody can maintain. Even scarier, someone found that the AI would fake tests: write a line saying "completed," then test that the line exists, which verifies essentially nothing. Others found that the AI would treat a temporary workaround as "standard practice" and systematically replicate that bad habit throughout the project.

So what do I think?

The two sides aren't actually talking about the same thing.

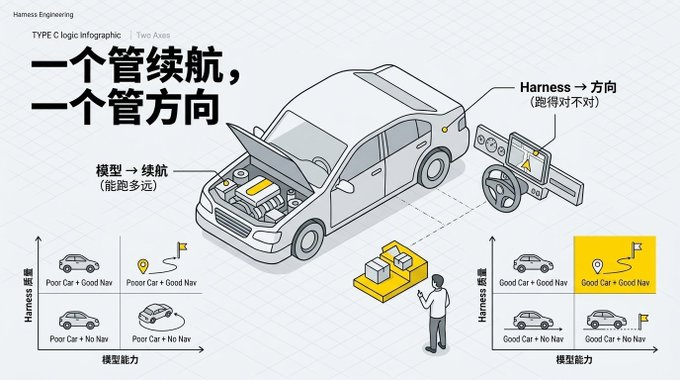

One judgment from an AI industry podcast struck me as the best I've heard: the model and the harness are two different dimensions. The model determines whether the AI can keep working without getting lost; the harness determines the quality of what it produces. One governs how far it can go, the other where it ends up.

Think of a car: the better the engine, the farther you can go. But the navigation system decides where you end up. True, with a stronger engine you can lean on navigation a little less.

Anthropic's own experience bears this out. After upgrading their model, they found that some of the management processes they had designed were no longer necessary; the model could handle those on its own. But having a separate AI dedicated to checking quality remained useful, because the question of "was it done well" doesn't disappear just because the AI gets smarter.

The early narrative of AI programming was "no more programming" and "natural language is the new programming language." But OpenAI's own practice shows that they spent the most effort on designing architectural constraints, writing checking rules, setting up verification processes, and managing context. These are the most critical parts of traditional software engineering. As they put it themselves: "The hardest challenge now is designing the environment, the feedback loops, and the control systems."

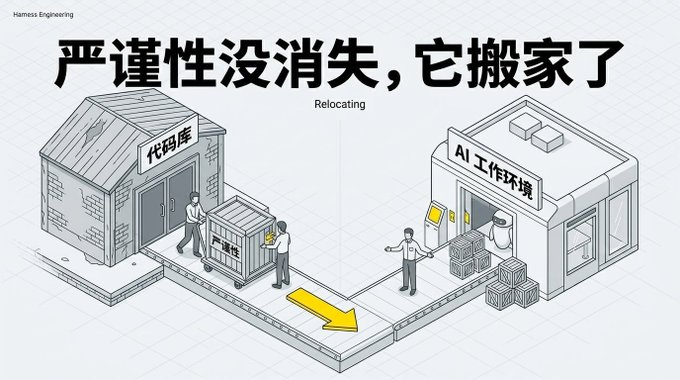

Chad Fowler (a highly influential programmer) calls this "the relocation of rigor": the real change isn't that you no longer need rigor, but that rigor moves from one place to another. It used to live in "writing every line of code"; now it lives in "designing the AI's working environment." Rigor didn't disappear. It moved.

My core judgment: harnesses are real, but also temporary. They fill gaps in current AI capability. Every step forward the AI takes eats a layer of harness, but new gaps appear that need new harnesses to fill. It's an ongoing arms race, not a safe haven.

A few trends worth watching

Technology choices will be shaped by AI. Future choices of programming language and framework may depend less on programmer preference and more on "how well AI can use this technology." Old, well-documented technologies with active communities stand to benefit.

The gap between new and old projects will widen. New projects can be built AI-friendly from scratch. But retrofitting an AI management system onto a project that has been running for 10 years is very hard. It's like installing an elevator in an old, already crowded building: 10 times harder than in a new one.

Lock-in is real. One person built six layers of automated processes around a single AI tool; switch tools, and all of that accumulated work drops to zero. The rules and processes you build up in Claude Code won't necessarily carry over to another AI.

Three scenarios you can try right now

If you write code: follow Hashimoto's method and write down a rule every time the AI makes a mistake. Once you've collected about 20, you'll start to see patterns and know where your AI tends to go wrong. But don't write too many: 20 to 60 rules is enough. With too many, the AI can't keep any of them in mind.

If you run a team: don't start with anything complex. First write a list of what "the AI must not do," which matters more than what "the AI should do." It's like managing an intern: telling him "never email a client directly" works far better than telling him in general terms how he should handle clients.
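A "must not do" list translates naturally into a deny-list checked before each agent action. A minimal sketch, assuming actions are described as hypothetical `tool:target` strings; the patterns are illustrative, and `fnmatch.fnmatchcase` is Python's standard shell-style wildcard matcher.

```python
import fnmatch
from typing import Optional, Sequence, Tuple

# Hypothetical action strings of the form "tool:target"; these patterns are illustrative only.
DENY: Tuple[str, ...] = (
    "send_email:client*",  # never email a client directly
    "git_push:main",       # never push straight to the main branch
    "db_drop:*",           # never drop a database table
)


def check_action(action: str, deny: Sequence[str] = DENY) -> Tuple[bool, Optional[str]]:
    """Return (allowed, matching_pattern). Anything not explicitly denied is allowed."""
    for pattern in deny:
        if fnmatch.fnmatchcase(action, pattern):
            return False, pattern
    return True, None


print(check_action("send_email:client-acme"))  # (False, 'send_email:client*')
print(check_action("git_push:feature-x"))      # (True, None)
```

This sketch allows anything not explicitly denied, which matches the list-first approach above; a stricter setup would flip it and deny by default.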

If you don't write code but use AI a lot: collect the requests you keep repeating ("don't use such a formal tone," "keep answers short") in one document and paste it to the AI at the start next time. That's the simplest harness. It's what I do every day: my style and revision rules are my harness, and the multiple rounds of revision afterward are my validation process.

One last honest word

Some say Harness Engineering is just traditional management in a new shell. That makes sense, but it isn't entirely right. Traditional management deals with people who learn, remember lessons, and reflect on themselves. Harness Engineering deals with a system that is probabilistic, forgetful, and will fake its results when you're not paying attention. The object has changed, so the method has changed.

But if you think you can learn this once and then sit back while the AI works, you'll fall behind. Models keep improving, harnesses keep chasing them, and there is always a gap in between. The real harness engineers are the ones who can create value in that gap.