- Abstract: No prior experience needed. This article takes you through the full production of an AI short video, from script to finished film.

By Changan | Biteye

Can someone on a content team who has never edited a video make an AI short video with a story, dialogue and cuts between shots? Yes, and the whole process takes less than half a day.

This article walks you through turning an idea into a story, the story into a storyboard, the storyboard into generated video, and the generated clips into an edited film.

You don't need any background. Follow the steps once and you'll have a complete AI short video.

I. From idea to story: an AI video is not generated from a single prompt

For many people, the first step in making an AI video is to open Jimeng (Dream AI), stare at the input box and wonder what to write. They type a few words, the result is far from what they imagined, and they start to wonder whether the tool is broken or whether they simply can't write prompts.

The problem is that what they have is an idea, not a story.

An idea is a direction: it tells you what to make. A story is a structure: it tells you what goes into every shot. Getting from one to the other takes a step of work in between, which is writing the script and breaking it into a storyboard.

The simplest way is to open any LLM, describe the vague idea in your head, and let it help you develop the story. You don't need to work out every detail; you only need to give it a direction, and the model can work out the rest with you.

Once the storyline is settled, don't jump straight to individual shots. First split it into a few large segments following the narrative pace, and pin down the core purpose of each one. This step controls the overall rhythm and keeps any segment from dragging or feeling rushed.

The reason: a single Jimeng video is at most 15 seconds long, and in practice anything under 12 seconds is the most stable, with the lowest chance of problems. A piece of about one minute, at roughly 10 seconds per clip, takes about five or six clips.
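To budget clips before storyboarding, the arithmetic above can be sketched as a small helper. This is a minimal sketch: `clips_needed` and the constants are hypothetical names, and the limits are the ones quoted in this article (15 seconds maximum, under 12 seconds most stable), not values read from any Jimeng API.

```python
import math

# Limits quoted in this article for a single Jimeng clip (assumed, not from an official API).
MAX_CLIP_SECONDS = 15
STABLE_CLIP_SECONDS = 12


def clips_needed(total_seconds: float, avg_clip_seconds: float = 10) -> int:
    """Return how many clips a piece of `total_seconds` needs at a given average clip length."""
    if avg_clip_seconds <= 0:
        raise ValueError("average clip length must be positive")
    if avg_clip_seconds > MAX_CLIP_SECONDS:
        raise ValueError(f"a single clip is capped at {MAX_CLIP_SECONDS} s")
    if avg_clip_seconds > STABLE_CLIP_SECONDS:
        print(f"warning: clips over {STABLE_CLIP_SECONDS} s are less stable")
    # Round up: a leftover partial clip still has to be generated as a whole clip.
    return math.ceil(total_seconds / avg_clip_seconds)


print(clips_needed(60))      # 6 clips at 10 s each
print(clips_needed(60, 12))  # 5 clips at 12 s each
```

At 10 seconds per clip a one-minute piece needs six clips; stretching each clip toward the 12-second stable limit brings it down to five, which matches the five-segment split used in this example.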

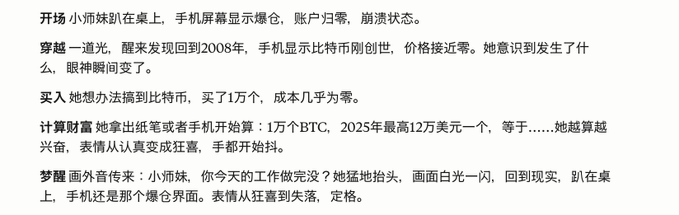

We split the story into five segments:

Segment 1: The opening. Its core task is to establish the setting and the character.

Segment 2: The time travel. Its core task is to establish the timeline.

Segment 3: Shows the character's shift from confusion to clarity.

Segment 4: Counting the money, pushing the emotion to its climax.

Segment 5: A twist that closes the loop with the opening.

Once the segments are fixed, break each one down further into specific shot descriptions. Each shot has four elements: the subject, the location, the action and the camera angle. Don't write motion into a storyboard frame; describe only a single static moment.
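The four-element rule above maps naturally onto a small record type. The sketch below is illustrative only: `Shot` and `to_prompt` are hypothetical names, the sample values are invented, and the word order of the rendered description is an assumption rather than a format any image model requires.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Shot:
    """One storyboard frame: a single static moment described by four elements."""
    subject: str   # who or what is in frame
    location: str  # where the moment takes place
    action: str    # what the subject is doing, as a frozen pose rather than a motion
    angle: str     # shot size / camera angle

    def __post_init__(self) -> None:
        # Every shot needs all four elements; reject empty ones early.
        for f in fields(self):
            if not getattr(self, f.name).strip():
                raise ValueError(f"missing shot element: {f.name}")

    def to_prompt(self) -> str:
        # Join the four elements into one description for an image model.
        return f"{self.angle}, {self.subject} {self.action}, in {self.location}"


# Hypothetical example shot for the opening segment.
shot = Shot(
    subject="Little Biteye",
    location="an office",
    action="sitting at a desk staring at a monitor",
    angle="medium shot",
)
print(shot.to_prompt())
# medium shot, Little Biteye sitting at a desk staring at a monitor, in an office
```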

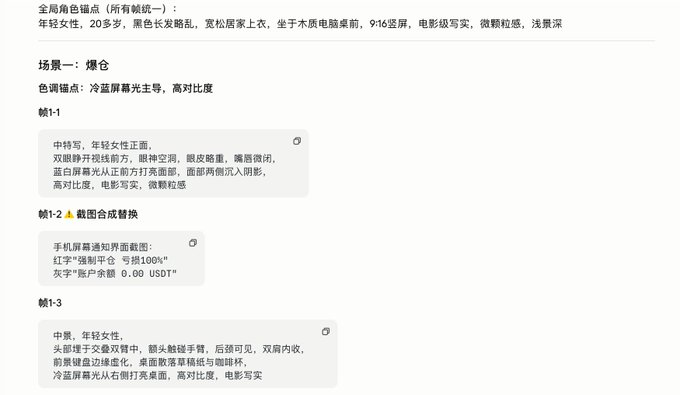

Copy the script for Segment 1 into the AI chat box and enter "Create storyboard descriptions based on the script for Segment 1"; the model returns a shot-by-shot breakdown.

II. From story to image: lock the character, scenes and shots first

This is the core chapter of the whole process: the quality of the images you generate here directly determines the quality of the final video.

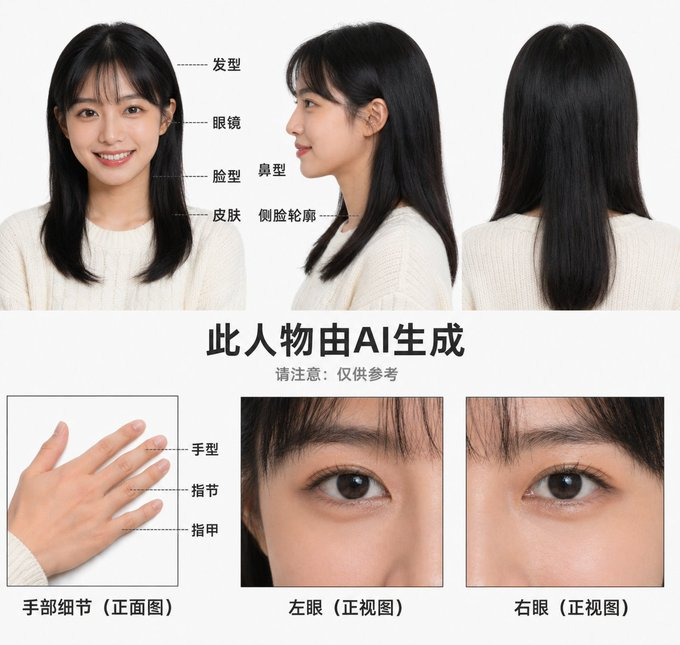

Make a three-view sheet first to lock in your lead

Before creating any storyboard frames, the first thing to do is make a three-view sheet of the main character.

A three-view sheet shows the same character from the front, side and back in one image. It fixes the character's appearance, so whatever the scene, you can use it to keep the character consistent.

If you skip this step and go straight to storyboard frames, you'll find the character looks different in every image: the hair changes, the face changes, and the result becomes unusable.

Open ChatGPT or Seedream and enter in the dialogue box:

"Create a three-view sheet of Little Biteye for me"

The AI generates one image of the same character from three angles. If the result is very different from what you want, you can upload a reference image.

Once you're happy with the three-view, download the image and upload it again as a reference for everything you generate afterwards.

Then make a scene reference

Once the character is defined, apply the same logic to create a reference image for your scene: enter "Generate an image of the office for me" in the dialogue box.

Before producing the storyboard itself, you need to understand one basic concept: the shot is the smallest unit of expression in a video.

The camera speaks too: different shot sizes convey different information. The common ones are:

- Wide shot: tells the audience where the scene takes place and who is in it.

- Medium shot: the shot size used most in narrative, showing both the action and the expression.

- Close-up: builds emotion by framing only a face, a hand or a key prop, magnifying the detail and giving the audience a strong emotional jolt.

Once you understand a single shot, go one layer further: a video is not one shot but several shots combined with rhythm.

In actual production, we usually structure the shots of a video as a four-panel or nine-panel grid: four or nine shots within one video make up one complete expression.

Choosing between four and nine panels is essentially a way of controlling rhythm:

- Slow-paced segments, such as the opening establishing scene or an emotional ending: use a four-panel grid. Four shots leave every image enough room to breathe.

- Fast-paced segments, such as the climax of a fight, where the camera has to cut rapidly to build tension: use a nine-panel grid. Nine shots in one video feel completely different.
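The rhythm difference between the two grids is easy to see as screen time per shot. This sketch assumes the clip's duration is split evenly across panels, which real edits need not do; `seconds_per_shot` is a hypothetical helper, and the 10-second clip length is the average used earlier in this article.

```python
def seconds_per_shot(clip_seconds: float, panels: int) -> float:
    """Average screen time per shot, assuming an even split across the grid's panels."""
    if panels not in (4, 9):
        raise ValueError("this workflow uses a four-panel or nine-panel grid")
    return clip_seconds / panels


for panels in (4, 9):
    print(panels, round(seconds_per_shot(10, panels), 2))
# 4 2.5
# 9 1.11
```

In a 10-second clip, a four-panel grid gives each shot about 2.5 seconds to breathe, while a nine-panel grid cuts roughly every second.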

Once you understand shots and rhythm, you can start the real production: turning an abstract story into concrete images.

With the character three-view and the scene reference ready, the next step is to turn the storyboard into images before generating any video. The reason is simple: AI is better at handling a specified single frame than a continuous process of change, so this significantly reduces the number of rerolls.

Here's how:

Each time you generate a shot, upload the character three-view and the matching scene reference to ChatGPT, then enter the prompt for the new shot:

"Based on the story script plus the storyboard descriptions (attached: the script and the AI-generated storyboard descriptions), together with the scene and character references, generate a four-panel storyboard for me."

The model splits the shot into four images based on the storyboard information you provided, keeping the character and scene consistent across them.

Tips: a few common pitfalls in text-to-image generation. Knowing them in advance saves a lot of time:

- If you want a shot of someone playing a game on their phone, the phone screen will automatically turn toward the viewer. The AI's logic is to make the content readable, so the game ends up polluting the image. The correct approach: "Hands hold the phone horizontally, the screen faces the person's face, and the back of the phone faces the camera."

- Job titles make the AI generate a whole scene: write "nurse" and it generates a hospital; write "chef" and it generates a kitchen. The right approach is to describe only the clothing you actually want, not the job title.

- An image model can only generate static images, so an action like "turning around" has no corresponding visual state. The correct approach is to describe only what exists within this single frame.

III. From image to video: prompts describe action, they don't restate the image

The storyboard frames are ready; now we turn them into moving video.

Open Jimeng (Dream AI)

Open your browser, search for "Dream AI" and go to the official site. Click Log in at the top right and sign in with a Douyin account or a mobile phone number; the site is directly accessible within China.

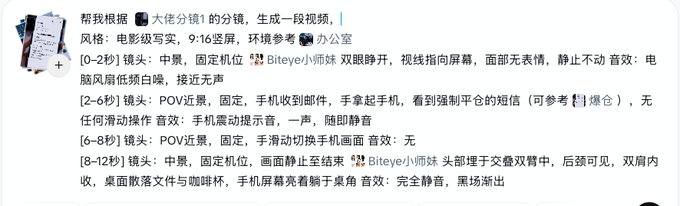

How to write the prompt

This is the most critical part of this step, and the place where newcomers most often go wrong.

First, drop in all the reference images. Jimeng supports uploading multiple reference images at once; just drag them into the chat box. Drag in everything you prepared in the previous chapter (the character three-view, the scene reference and the four- or nine-panel storyboard) all at once, and Jimeng will combine the information from these images to generate the video.

A common beginner mistake here is restating what's in the images. Jimeng can already see your uploads; you don't need to tell it what they show.

The prompt should say what moves in the frame, how it moves, whether the camera moves, and what happens in each time span.

Write one time span of the video per line, following this template:

"Refer to the storyboard above and generate a video.

[start-end seconds], [shot size], [camera movement], [character or subject] + [specific action], sound: [sound description]."
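If you write many clips, it can help to keep each time span as data and render the template from it, checking that the spans are contiguous and fit within the 15-second cap quoted earlier. A minimal sketch: `Beat`, `build_prompt`, the `0-4s` time notation and the sample beats are all hypothetical; Jimeng simply receives the resulting text.

```python
from dataclasses import dataclass

MAX_CLIP_SECONDS = 15  # single-clip cap quoted earlier in this article


@dataclass
class Beat:
    start: int       # seconds
    end: int         # seconds
    shot_size: str   # e.g. "close-up"
    camera: str      # camera movement, e.g. "static" or "slow push-in"
    subject: str
    action: str
    sound: str


def build_prompt(beats: list[Beat]) -> str:
    """Render beats into the one-line-per-time-span template, checking the timeline."""
    lines = ["Refer to the storyboard above and generate a video."]
    expected_start = 0
    for b in beats:
        # Spans must follow on from each other with no gaps, overlaps or zero length.
        if b.start != expected_start or b.end <= b.start:
            raise ValueError(f"gap, overlap or empty span at {b.start}-{b.end}s")
        lines.append(
            f"{b.start}-{b.end}s, {b.shot_size}, {b.camera}, "
            f"{b.subject} {b.action}, sound: {b.sound}"
        )
        expected_start = b.end
    if expected_start > MAX_CLIP_SECONDS:
        raise ValueError(f"clip runs {expected_start}s, over the {MAX_CLIP_SECONDS}s cap")
    return "\n".join(lines)


# Hypothetical beats for one clip.
beats = [
    Beat(0, 4, "wide shot", "static", "Little Biteye", "sits alone in the office", "keyboard clicks"),
    Beat(4, 8, "close-up", "slow push-in", "Little Biteye", "slowly looks up", "a low hum rises"),
]
print(build_prompt(beats))
```

The slow push-in and slow head movement follow the tip later in this chapter that slow motion generates more stably than fast motion.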

The sound description is the part beginners most often ignore. If the video has dialogue, writing just "speaks" isn't enough: the model will produce a random voice. There are two ways to keep a character's voice consistent across multiple videos:

1️⃣ Use the first clip's audio as the reference

After the first video is generated, export its audio separately. When generating each subsequent clip, upload that audio as a voice reference; Jimeng will use it to generate the character's voice in the later clips, keeping the voice consistent.

2️⃣ Use Fish Audio

Open Fish Audio, search for a voice that suits the character, preview it and download a reference audio clip. Use this same reference audio for every video you generate, and the voice stays consistent throughout.

Control the tone of the AI voice with punctuation

When writing lines for an AI voice model, use punctuation deliberately; otherwise the model just reads the text flatly. The same sentence with different punctuation can take on a completely different tone.

The core logic: punctuation decides the pauses, and the pauses decide the mood.

... An ellipsis breaks off the sound but keeps the breath going; suited to reflection, hesitation and trailing off mid-sentence.

...! Used together, they produce a sudden outburst after holding back.

( ) Content in parentheses is automatically spoken more quietly, like an aside; suited to inner monologue and talking to oneself.

*Content* Text between asterisks is spoken lower, slower and heavier, to emphasize key information.

[ ] Square brackets hold instructions rather than lines, such as [deep breath] or [one-second pause]; the model performs the action instead of reading it aloud.
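Before pasting lines into the generator, a quick check of which cues a line actually uses can catch a missing bracket or asterisk. This sketch only detects the symbols; the cue meanings are the ones described in this article, not documented behavior of any particular voice model, and `CUES` and `cues_in` are hypothetical names.

```python
import re

# Cue meanings as described in this article; a given voice model may react differently.
CUES = {
    "ellipsis": (re.compile(r"\.\.\.|…"), "trailing pause: hesitation, unfinished thought"),
    "outburst": (re.compile(r"(\.\.\.|…)!"), "sudden outburst after holding back"),
    "aside": (re.compile(r"\([^)]*\)"), "quieter aside: inner monologue"),
    "emphasis": (re.compile(r"\*[^*]+\*"), "lower, slower, heavier delivery"),
    "instruction": (re.compile(r"\[[^\]]*\]"), "performed, not read aloud"),
}


def cues_in(line: str) -> list[str]:
    """List which punctuation cues a dialogue line uses, in the order of CUES."""
    return [name for name, (pattern, _) in CUES.items() if pattern.search(line)]


# Hypothetical line using every cue.
line = "[deep breath] I thought... (no, stop) it was *gone*...!"
for name in cues_in(line):
    print(name, "->", CUES[name][1])
```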

Tips:

- AI has no sense of spatial position and often gets confused, so you need a separate "position-relationship reference" image to tell it how the characters move, as in the figure below. A simpler method is to draw arrows showing each character's movement path and add the instruction "delete the arrows".

- Write slow motion or slow movement. The model handles slow motion far more stably than fast motion. If a segment needs a fast pace, get the speed from editing rather than asking the model for fast action.

- Upload the reference images with every video, not just once. The model has no memory across generations; without the reference images, the character's appearance will drift.

IV. From clips to film: editing determines the final quality

Editing and post-production are the final step in the process. Each piece of material generated so far is independent: the color tone varies, the rhythm doesn't connect and the sound is scattered. Editing stitches these fragments into a complete story.

With music, the video hits viewers harder; with subtitles, the lines come across more clearly. The same material, edited well or badly, ends up a whole level apart.

The approach has four steps: arrange the material, unify the color tone, add sound, and add subtitles, then export.

Step 1: Arrange the material

Open CapCut (Jianying) and drag all the clips onto the timeline in scene order. Leave color and sound for now; check the order and whether there are rhythm problems, and trim any clip that runs too long.

Step 2: Unify the color tone

Clips generated at different times can differ slightly in color temperature and brightness, and look disjointed when placed together. The fix: select all the footage in a scene and apply one filter to the whole scene, for example a cool blue tone at first and a warm yellow tone from the second scene on, so that each scene stays consistent.

Step 3: Add background music and sound effects

The dialogue was already handled when the video was generated, so this step mainly adds two kinds of sound: background music and ambient sound effects.

Background music sets the overall emotional tone; keep its volume below 30% of the dialogue so it doesn't drown the lines out.
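If your editor's volume control is in decibels rather than percent, the 30% guideline has to be converted. This sketch assumes the 30% is an amplitude ratio, which gives about -10.5 dB relative to the dialogue; if it were meant as a power ratio, the formula would use 10 instead of 20 and give about -5.2 dB. `ratio_to_db` is a hypothetical helper.

```python
import math


def ratio_to_db(ratio: float) -> float:
    """Convert an amplitude ratio (e.g. 0.3 for 30%) to a gain in decibels."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return 20 * math.log10(ratio)


# Background music at 30% of the dialogue level, treated as an amplitude ratio.
print(round(ratio_to_db(0.3), 1))  # -10.5
```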

Step 4: Subtitles

Use CapCut's "smart subtitles" to recognize the dialogue automatically, then proofread the text. Narration or inner-monologue lines are best distinguished from regular dialogue with a different style, such as italics or a different color.

V. From tool to expression: what AI video has really changed

We often hear that the AI era has lowered the barrier to video production, and that soon anyone will be able to make a Hollywood blockbuster.

But a low barrier doesn't mean you can actually make something. The tools are open and tutorials are everywhere, yet most people get stuck in the same place: they never run the whole process end to end. In this article, Biteye has taken you from a vague idea to a complete piece.

In the past, this process required a team of professionals dividing the work: writing, storyboarding, art, cinematography and editing, each a barrier of its own.

Now these stages haven't disappeared; they've just been compressed into a single workflow.

This points to a deeper change: video is no longer a product of production capacity but a product of expression.