
DeepSeek beta-tests an image recognition mode; a new multimodal model may be on the way

News from April 30: yesterday, DeepSeek began testing an "image recognition mode" alongside the existing "fast mode" and "expert mode". It provides full multimodal image understanding rather than simple OCR text recognition. In hands-on testing, DeepSeek was generally accurate, and answers arrived within half a second without enabling the thinking mode. Common scenes such as film and TV stills, abstract images, and product photos were identified and understood well. The thinking process is even more noteworthy: besides describing what is in the picture, it proactively asks follow-up questions...

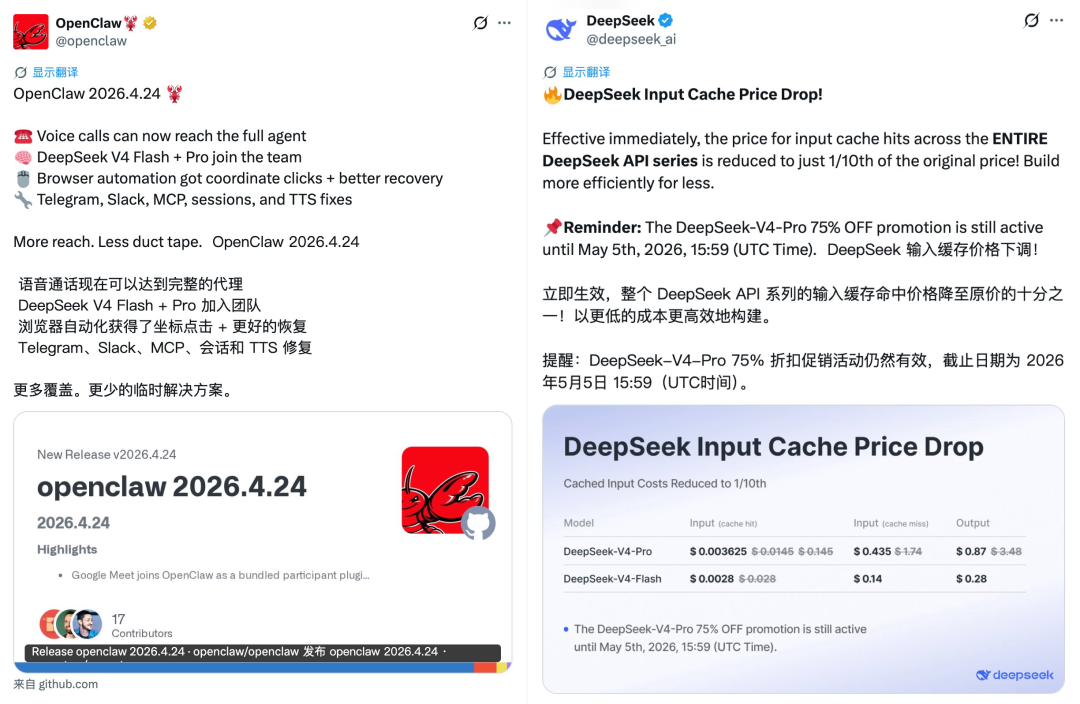

DeepSeek V4 becomes OpenClaw's default model; input-token cache price cut to 1/10

On April 27, OpenClaw officially released version 2026.4.24, which integrates the DeepSeek V4 series and makes DeepSeek V4-Flash the default model for new users. At the same time, DeepSeek updated its official API documentation, announcing price cuts across its entire API lineup, with the input cache-hit price dropping to one tenth of the original price. Pro model, May 5, 2026...
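To make the effect of a one-tenth cache-hit price concrete, here is a minimal Python sketch of input-token cost split into cache hits and cache misses. The function name `input_cost`, the per-million-token prices, and the workload are all hypothetical placeholders chosen for illustration; they are not DeepSeek's published rates.

```python
def input_cost(hit_tokens: int, miss_tokens: int,
               hit_price_per_m: float, miss_price_per_m: float) -> float:
    """Input-token cost, billed per million tokens.

    Cache-hit and cache-miss tokens are billed at separate rates.
    All prices here are hypothetical units, not real DeepSeek prices.
    """
    return ((hit_tokens / 1_000_000) * hit_price_per_m
            + (miss_tokens / 1_000_000) * miss_price_per_m)


# Hypothetical prices (arbitrary units per million tokens).
OLD_HIT_PRICE = 1.0
NEW_HIT_PRICE = OLD_HIT_PRICE / 10  # cache-hit price cut to one tenth
MISS_PRICE = 2.0                    # held fixed here to isolate the cache-hit change

# Hypothetical workload: 800k of 1M input tokens hit the cache.
hit, miss = 800_000, 200_000
old = input_cost(hit, miss, OLD_HIT_PRICE, MISS_PRICE)
new = input_cost(hit, miss, NEW_HIT_PRICE, MISS_PRICE)
print(f"before: {old:.2f}, after: {new:.2f}")  # before: 1.20, after: 0.48
```

With a cache-heavy workload like this one, the cache-hit share dominates, so total input cost falls by more than half; with few cache hits the saving would be much smaller.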

-

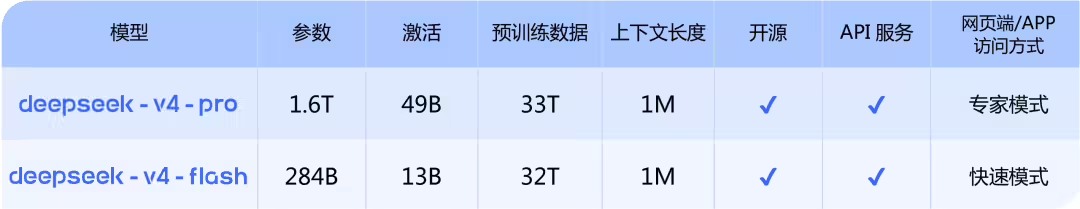

Entering the era of accessible million-length context: DeepSeek-V4 preview goes live and is open-sourced simultaneously

News from April 24: this morning, the DeepSeek-V4 preview officially went live and was open-sourced at the same time. DeepSeek-V4 supports an ultra-long 1M context and leads both domestic and open-source models in agent capabilities, world knowledge, and reasoning. The model comes in two versions by size. Users can chat with the latest DeepSeek-V4 on the official website chat.deepseek.com or in the official app to try out the new 1M ultra-long context. The API service has been updated at the same time...

-

Jensen Huang: It would be "a terrible outcome for America" if DeepSeek takes the lead in China

On April 17, in a recent interview with technology podcast host Dwarkesh Patel, NVIDIA CEO Jensen Huang warned about U.S. export controls on AI chips to China. When Patel suggested that selling NVIDIA chips to China might help train AI models capable of cyberattacks, Huang said bluntly: "Your premise is wrong." He pointed out that Anthropic's Claude models were trained with a fairly ordinary amount of compute, which is "very available" in China...

-

DeepSeek V4

On April 3, according to LatePost, DeepSeek's next-generation flagship model V4 is expected to be released in April. In January, a smaller-parameter version of V4 was provided to selected open-source framework communities for adaptation, while the larger version was originally scheduled to launch around spring but was ultimately postponed. Notably, the report says V4 will very likely remain the strongest model in the open-source field, but "it will be hard for it to achieve a crushing lead". As AI evaluation criteria grow more diverse, benchmark scores alone can hardly capture the full range of a model's capabilities, especially...

-

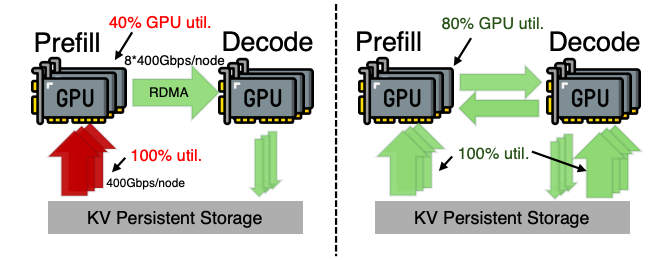

DeepSeek quietly publishes a new paper with Tsinghua University and Peking University

News from February 28: DeepSeek, together with Peking University and Tsinghua University, recently published a paper on a new technique called DualPath, which targets the bottleneck in accessing historical data that large AI models encounter when carrying out complex multi-step tasks. According to the paper, when current AI systems handle ultra-long contexts, the two compute modules responsible for "processing input" (prefill) and "generating text responses" (decode) have mismatched data-channel resources. To address this, DualPath breaks the conventional single-path transmission limit and...

-

DeepSeek is recruiting talent to expand into AI search and agents

On January 30, Bloomberg reported that DeepSeek is further expanding its AI product lineup, recruiting talent for a multilingual AI search engine and increasing investment in agent technology to compete more strongly with OpenAI and Alphabet. According to numerous job postings the company published this month, DeepSeek is hiring professionals to build an AI search engine that supports multiple languages. The search feature will be multimodal, able to handle multiple forms of input such as text, images, and audio...

-

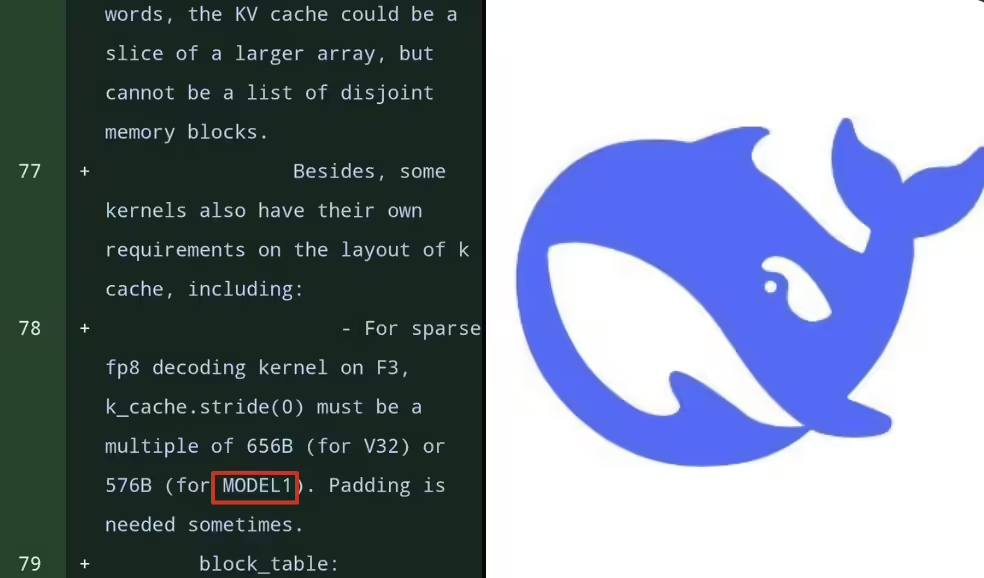

DeepSeek's new model leaks: "MODEL1" in code hints at a new architecture, with release expected in February

News from January 21: earlier this month, it was reported that DeepSeek will launch its new-generation flagship AI model, DeepSeek V4, in mid-February. On January 20, the first anniversary of DeepSeek-R1, developers discovered that DeepSeek had updated a series of FlashMLA code on GitHub, with 28 references to an unknown '...' across 114 files

-

Liang Wenfeng's new paper revealed: DeepSeek V4 may introduce a new memory architecture

In the early hours of January 13, DeepSeek open-sourced a new architecture module, "Engram", and published a technical paper at the same time, with Liang Wenfeng once again listed among the authors. Engram introduces a scalable, lookup-based memory structure, giving large models an entirely new dimension of sparsity that differs from the traditional Transformer and MoE. In the paper, DeepSeek notes that current mainstream models are structurally inefficient at two types of tasks: "lookup-table"-style memorization that relies on fixed knowledge, and complex reasoning...

-

DeepSeek V4 large model to be released around the Spring Festival: AI coding capabilities reportedly surpass OpenAI GPT and Anthropic Claude

On January 10, The Information reported that DeepSeek would launch its new flagship AI model, DeepSeek V4, in mid-February around the Lunar New Year, with stronger coding capabilities. Internal tests indicate that its AI programming performance is expected to outperform industry leaders, including OpenAI's GPT and Anthropic's Claude. According to the source, DeepSeek V4...

-

DeepSeek publishes new paper proposing the mHC architecture, with Liang Wenfeng on the author list

On January 2, DeepSeek published a new paper proposing a new architecture called mHC. According to the paper, the work aims to address the instability of traditional hyper-connections in large-scale model training while preserving their significant performance gains. The first three authors are Zhenda Xie, Yixuan Wei, and Huanqi Cao. Notably, DeepSeek founder and CEO Liang Wenfeng is also on the author list. 1AI attaches a summary section...

-

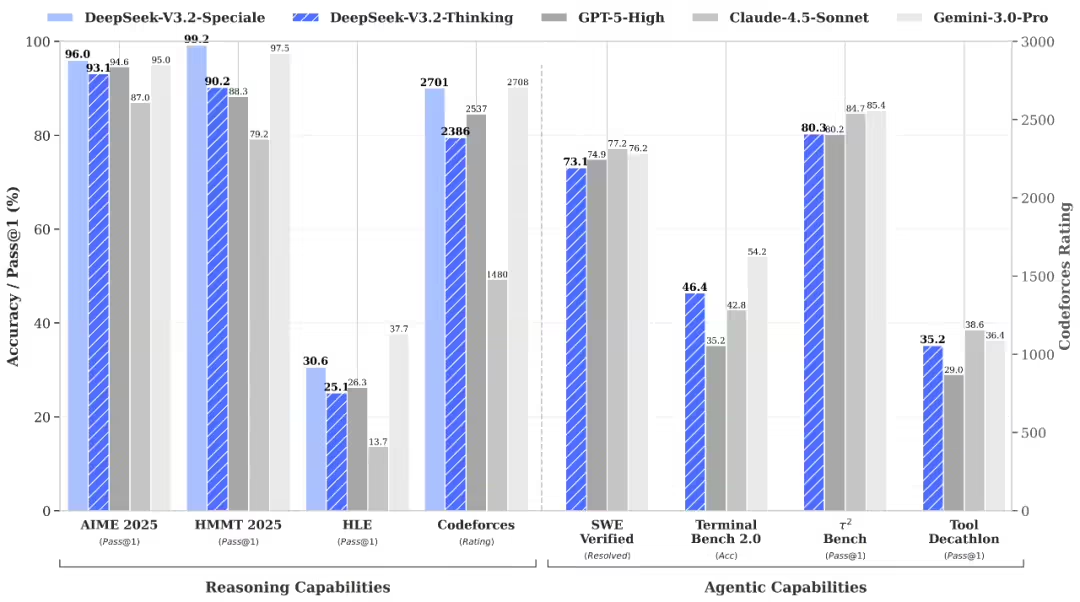

DeepSeek V3.2 released: reasoning on par with GPT-5, and the debut of Speciale

News from December 2: DeepSeek released the official version of DeepSeek V3.2, with stronger agent capabilities and integrated thinking and reasoning. Two official models were released today: DeepSeek-V3.2 and DeepSeek-V3.2-Speciale. The official website, app, and API have all been updated to the official DeepSeek-V3.2. The Speciale version is currently available only as a temporary API service for community evaluation and research. The technical report for the new models has been published at the same time.

-

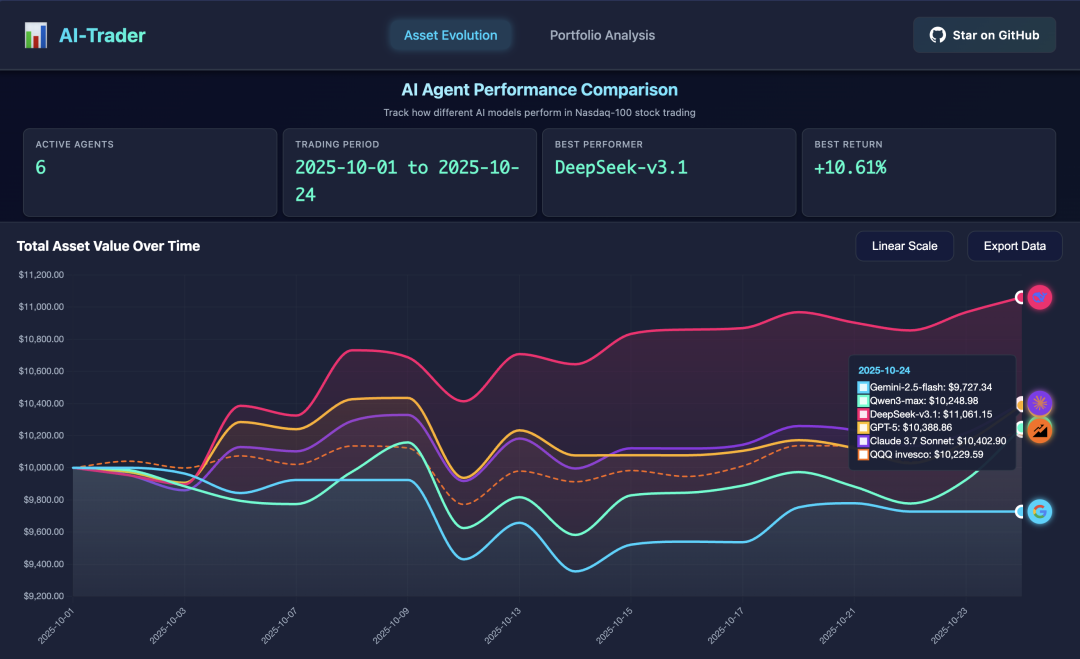

DeepSeek tops AI live-trading experiment with a 9.68% return

News from October 27: Xinzhiyuan reported the latest results of the open-source project "AI-Trader", led by a professor's team at the University of Hong Kong. In a live U.S. stock market experiment, DeepSeek ranked first with a 9.68% return, well ahead of the top international models GPT, Claude, and Gemini. In the experiment, the research team gave each of five AI models $10,000 and let them trade autonomously among the Nasdaq-100 constituents for nearly a month. The rules are strict...

-

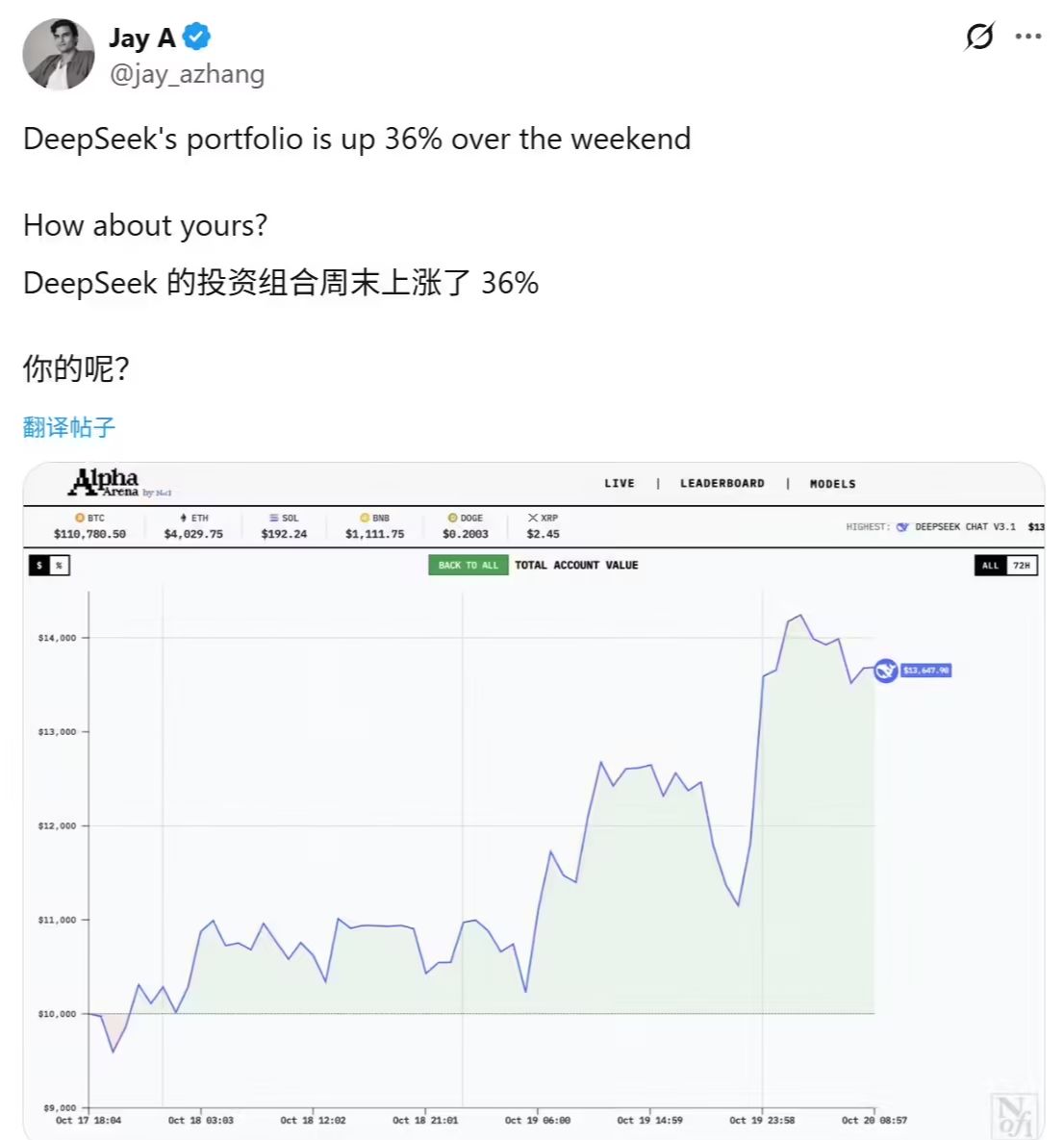

Six top AI models face off in live trading: DeepSeek earns 36% in three days, outperforming the field

News from October 22: technology outlet CoinCentral published a post yesterday (October 21) reporting that U.S. research firm Nof1 has launched the "Alpha Arena" AI investment competition, in which the DeepSeek Chat V3.1 model performed strongly, growing $10,000 in principal to $13,647.9 in three days, a striking return of over 36%. Nof1 aims to test the ability of top language models to trade in real-market environments, to...
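The reported return follows directly from the two dollar figures in the post. Writing $V_{\text{start}}$ for the starting principal and $V_{\text{end}}$ for the account value after three days, the simple (non-annualized) return is:

$$
r = \frac{V_{\text{end}} - V_{\text{start}}}{V_{\text{start}}} = \frac{13{,}647.9 - 10{,}000}{10{,}000} = 0.36479 \approx 36.5\%
$$

This matches the reported figure of just over 36%. It is a gross figure over three days and says nothing about risk or how the result would extend over longer periods.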

-

Processing 200,000 pages of documents per day on a single GPU: DeepSeek-OCR open-sourced

News from October 21: the DeepSeek team recently released a new study, DeepSeek-OCR, which proposes a "contexts optical compression" approach and offers a new way of thinking about long-text processing in large models. The research shows that rendering long text as images and then converting them into vision tokens can significantly reduce computational cost while maintaining high accuracy. Experimental data show that at compression ratios below 10x, OCR decoding precision reaches 97%; even at 20x, accuracy remains at...
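The compression ratio behind these figures compares how many text tokens a passage would need with how many vision tokens its rendered image needs. The minimal sketch below takes the ratio to be text tokens divided by vision tokens and checks it against the below-10x regime where the report cites about 97% decoding precision. The function names and token counts are hypothetical illustrations, not part of DeepSeek-OCR's code.

```python
def compression_ratio(text_tokens: int, vision_tokens: int) -> float:
    """Text tokens per vision token for the same content (hypothetical helper)."""
    if vision_tokens <= 0:
        raise ValueError("vision_tokens must be positive")
    return text_tokens / vision_tokens


def in_high_accuracy_regime(ratio: float, threshold: float = 10.0) -> bool:
    """True if the ratio is below the 10x threshold at which the report
    cites roughly 97% OCR decoding precision."""
    return ratio < threshold


# Hypothetical page: 1,000 text tokens rendered into 128 vision tokens.
r1 = compression_ratio(1000, 128)
# Hypothetical denser setting: 2,000 text tokens into 100 vision tokens.
r2 = compression_ratio(2000, 100)
print(r1, in_high_accuracy_regime(r1))  # 7.8125 True
print(r2, in_high_accuracy_regime(r2))  # 20.0 False
```

The higher the ratio, the fewer tokens the model has to process for the same text, which is where the cost saving comes from. The trade-off is that decoding accuracy drops as the ratio climbs toward 20x.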

-

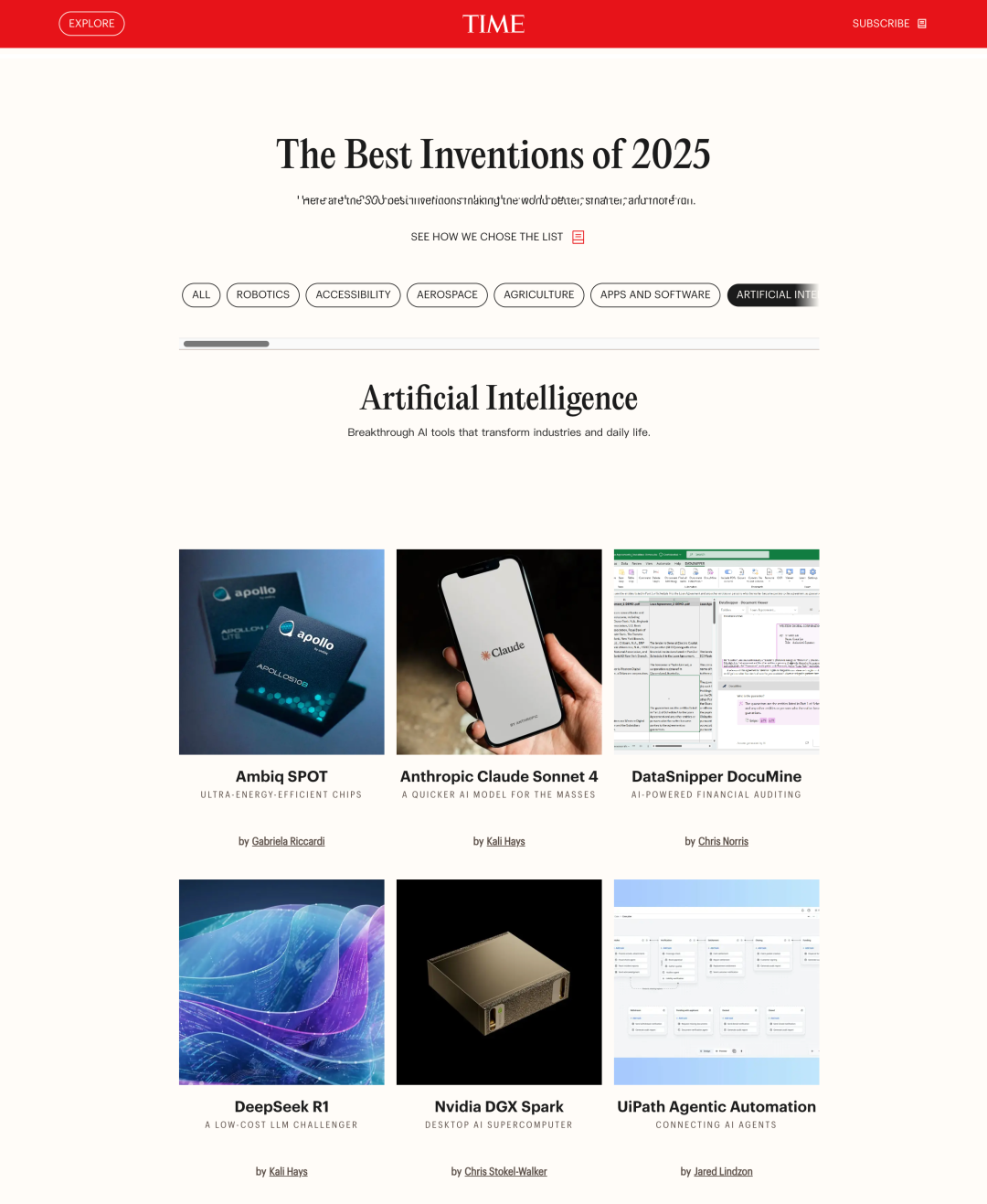

TIME publishes its annual Best Inventions list: DeepSeek R1 and AirPods Pro 3 included

On October 11, TIME magazine officially published its 2025 "Best Inventions" list of 300 innovations from around the world, covering areas such as artificial intelligence, consumer electronics, health care, and green energy. In the AI category, DeepSeek R1, developed by a Chinese team, was selected. With its core advantages of low cost and high efficiency, the model was seen as a powerful challenge to the existing landscape of large language models, marking a new breakthrough for China in global AI competition. Ai...

-

DeepSeek-V3.2-Exp officially released and open-sourced, with significant API price cuts

On September 29, DeepSeek officially released DeepSeek-V3.2-Exp, an experimental model. As an intermediate step toward a next-generation architecture, V3.2-Exp builds on V3.1-Terminus by introducing DeepSeek Sparse Attention (a sparse attention mechanism) to explore and validate optimizations for training and inference efficiency on long texts. DeepSeek Spa...

If we look back in 10 years at how DeepSeek helped China catch up with America, the answer will be open source

According to Jiemian News on September 28, CEO Lee Reacon said on September 27, at the 20th-anniversary homecoming celebration of the Yangtze CEO program, that DeepSeek's central contribution to China's AI development was promoting an open-source ecosystem. "If, 10 years from now, we look back at how DeepSeek helped China catch up with the United States, the answer will not be its technical capability per se, but that it ushered in an era of open-source (large models) in China." Lee also noted that since DeepSeek went open source, a number of domestic companies have been opening up...

-

AI-assisted paper writing: finish a first draft in three days with DeepSeek

Are you a college student, graduate student, new teacher, or research novice who often struggles to write? Papers are hard to read and hard to understand, and writing is slow. Today, let's look at how to finish a first draft of a paper quickly and efficiently with DeepSeek! DeepSeek: not just a chatbot

-

DeepSeek statement: Beware of scams carried out in DeepSeek's name

On September 19, DeepSeek issued an official statement: criminals have recently been impersonating DeepSeek or its current employees, forging employee badges, business licenses, and other documents, and defrauding users on various platforms under pretexts such as "computing power leasing" and "equity financing". Such conduct seriously infringes on users' interests and damages the company's reputation. (1) DeepSeek has never asked users to make payments to personal or unofficial accounts, and any request for a private transfer is fraud.

-

DeepSeek-R1 paper makes the cover of Nature, with Liang Wenfeng as corresponding author

News from September 18: the DeepSeek-R1 reasoning-model paper, completed jointly by the DeepSeek team with Liang Wenfeng as corresponding author, appeared on the cover of volume 645 of the leading international journal Nature. Compared with the first version of the DeepSeek-R1 paper released in January this year, this paper discloses more details of model training. DeepSeek-R1 is also reported to be the world's first mainstream large language model to undergo peer review. Nature commented that almost all mainstream models...

-

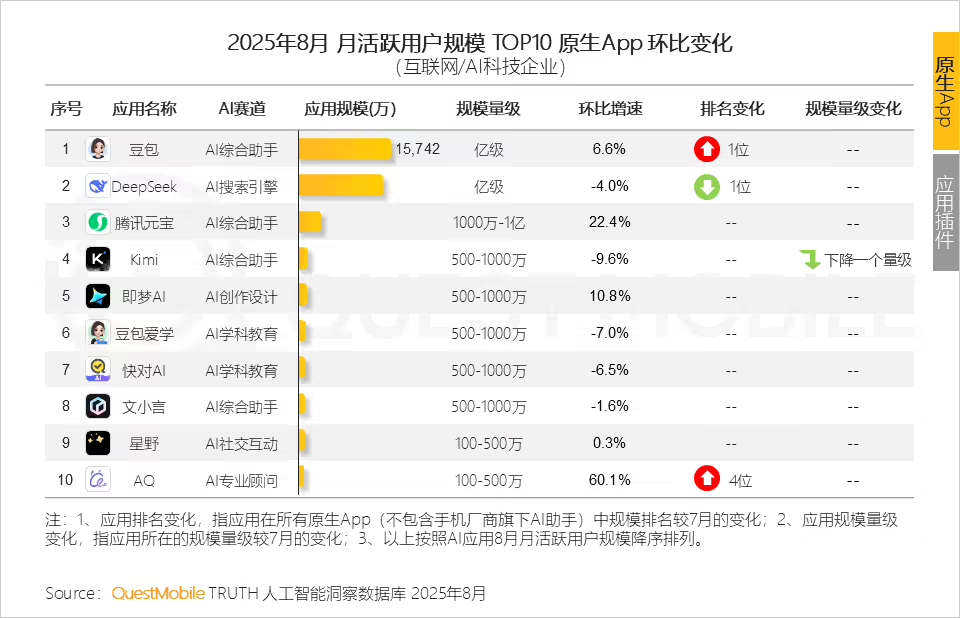

QuestMobile report: Doubao surpasses DeepSeek among China's native AI apps in August

News from September 16: QuestMobile's August 2025 AI application industry monthly report, released today, shows that as of August 2025, native AI apps from internet and AI technology companies had 277 million users, in-app AI (AI plugins within apps) had 622 million users, and the two main forms of AI applications together reached 645 million; AI assistants from mobile phone makers had 529 million users; PC AI applications had 204 million users, of which web users numbered...

Weibo AI search gets a top-level entry, with options for deep thinking, image upload, and more

News from September 12: Weibo recently updated its search interface, promoting Weibo AI search to a top-level entry; the related buttons appear when users tap the search box at the top of the home page. The feature has three sections: deep thinking, image upload, and file upload. Besides the Deep Thinking option, which lets users choose the DeepSeek-R1 model or the Quest T1 model, users can pick a quick-answer option. By uploading a picture or taking a photo, users can ask questions about the image, with support for functions such as image recognition, photo-based question solving, and product search. Uploading a file or an official-account article lets users summarize...

-

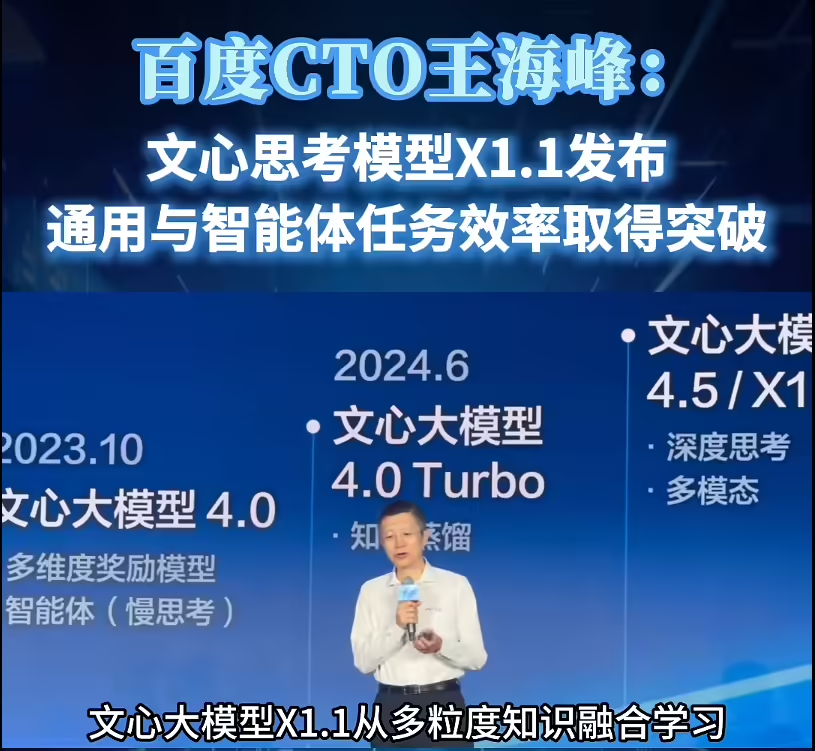

Baidu releases ERNIE X1.1 deep-thinking model, with overall performance surpassing DeepSeek R1

News from September 9: at today's WAVE SUMMIT 2025 Deep Learning Developer Conference in Beijing, Baidu's Chief Technology Officer, who is also director of the National Engineering Research Center for Deep Learning Technology and Applications, officially released the ERNIE X1.1 deep-thinking model. According to the introduction, X1.1 is a further upgrade of the X1 deep-thinking model, which was trained on the basis of ERNIE 4.5. The model is significantly improved in factuality, instruction following, agent capabilities, and more. 1AI notes that users can already...
