-

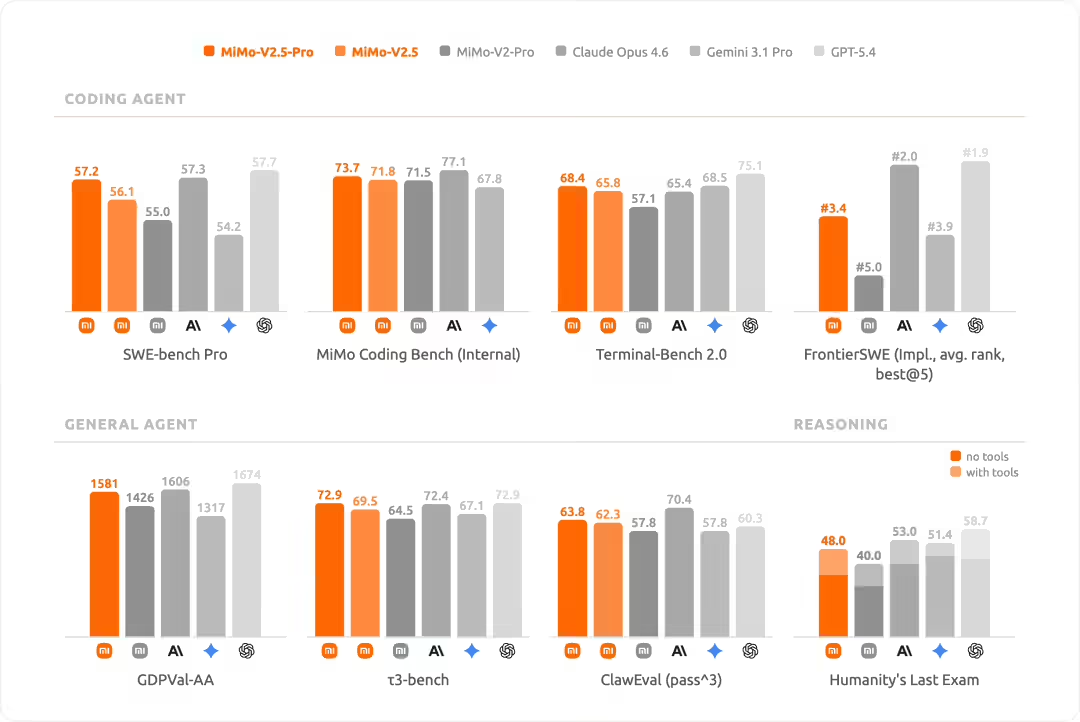

MiMo-V2.5 to be open-sourced and adapted to nearly all domestic chips

On April 27, according to blogger @foodshop researcher Will, at today's Xiaomi Investor Day, Tsumi, Vice President of Xiaomi Group and Chairman of its Technical Committee, gave a speech titled "Agents reshape Xiaomi and the human-car-home ecosystem". The organizers summarized the core points as follows: agents reshape the paradigm of Xiaomi and its "human-car-home full ecosystem"; the company will spend more than $60 billion on AI over the next three years, a figure that is only a floor with no upper cap; Xiaomi has full-stack capability for the AI era: the infrastructure, data, model, framework and ecosystem layers; the remodeling of one side..- 3.6k

-

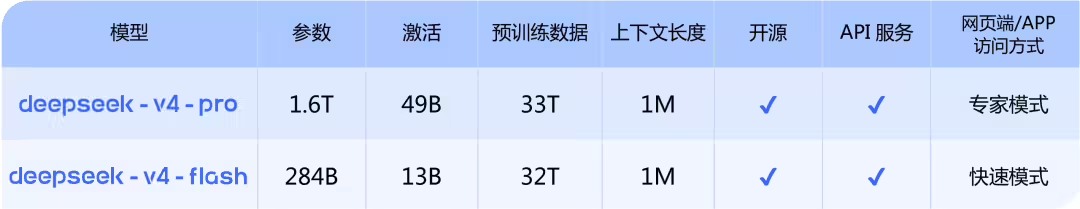

Entering the era of million-length context for everyone: DeepSeek-V4 preview officially launched and open-sourced at the same time

April 24 news: this morning, the DeepSeek-V4 preview officially went online and was open-sourced at the same time. DeepSeek-V4 has a million-word ultra-long context and leads both domestic and open-source models in agent capabilities, world knowledge and reasoning. The model comes in two versions by size. Through the official website chat.deepseek.com or the official app, users can chat with the latest DeepSeek-V4 and explore the new experience of 1M ultra-long context memory. The API service has been updated in sync. More..- 2.5k

-

Qwen3.6-27B open-sourced: a 27-billion-parameter dense model whose programming ability exceeds MoE models 15 times its size

On April 23, Alibaba Cloud's Tongyi team announced yesterday that its open-source model family has a new member: Qwen3.6-27B. This is a dense multimodal model with 27 billion parameters, the model size the community has requested most. It follows the earlier releases of Qwen3.6-Plus and Qwen3.6-35B-A3B; the open-source 27B version keeps the advantages of a dense architecture while comprehensively upgrading agentic programming and multimodal reasoning. According to official sources, Qwen3.6-27B supports..- 2.4k

-

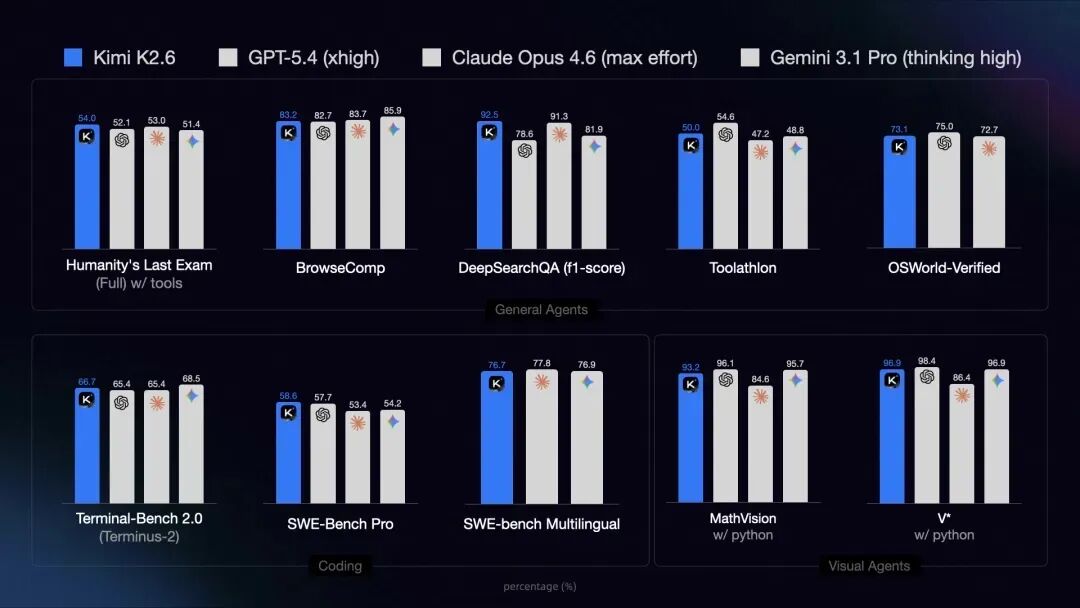

Moonshot AI's most powerful model, Kimi K2.6, released and open-sourced, with coding ability rivaling GPT-5.4

April 21 news: yesterday, Moonshot AI officially released and open-sourced its new model, Kimi K2.6, which focuses on upgrades to coding, AI agent and office capabilities. (a) Stronger coding capability: the internal benchmark test score is about 20%; it can code continuously for 13 hours, handle more than 4,000 lines of code, and support multiple languages such as Rust, Go, Python -

Hermes Agent: a free, open-source AI agent framework that gets smarter the more it is used

Hermes Agent is an open-source AI agent framework published by Nous Research and released under the MIT license. Unlike an IDE programming assistant or a chatbot wrapper around a single API, Hermes Agent is an autonomous agent deployed on the user's own server, with persistent cross-session memory and automatic skill generation: the framework automatically extracts a solution path into a reusable skill file, then calls and continuously optimizes it the next time a similar task comes up, forming "..- 4.2k

-

Hermes Agent complete tutorial: a step-by-step guide for absolute beginners

Step 1: What is Hermes Agent? Hermes Agent is an open-source AI agent developed by the Nous Research team. Unlike a regular chatbot (such as ChatGPT), it can: remember all your previous conversations (long-term memory); learn from each task and automatically create new "skills"; help you do real things, such as executing commands, browsing web pages, writing files and managing tasks; and connect to Telegram, Feishu, Discord, etc...- 5.4k

-

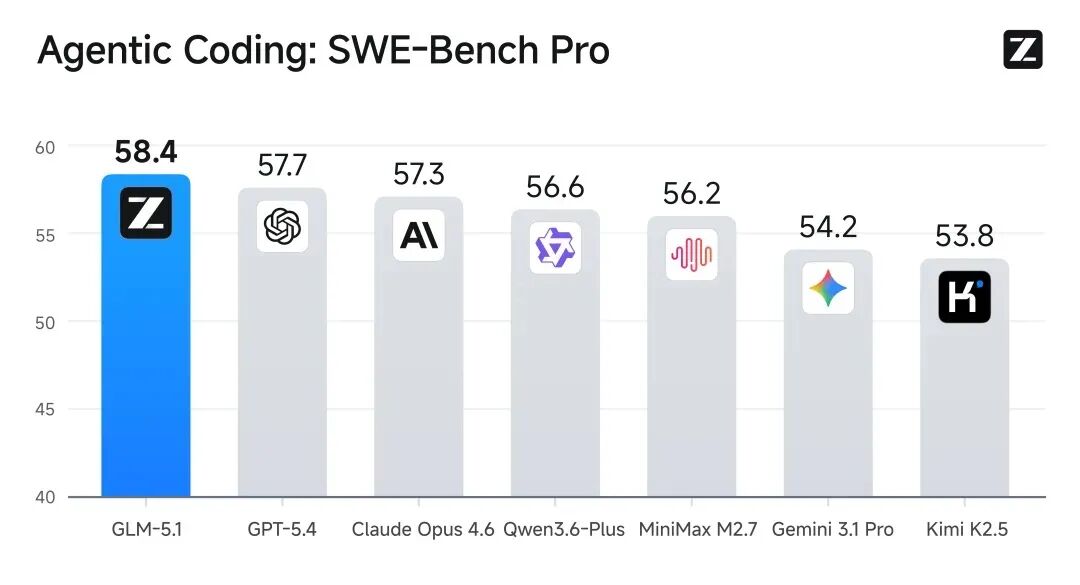

GLM-5.1: Zhipu's best programming model

April 9 news: yesterday, Zhipu AI officially announced the open-sourcing of its flagship AI agent engineering model, GLM-5.1, which continues to use the permissive MIT license and supports personal and commercial use. GLM-5.1 is the strongest flagship model Zhipu has released so far, with the core design goal of carrying out AI agent tasks continuously and effectively over longer periods. According to the announcement, GLM-5.1 can work independently and continuously for more than eight hours on a single task, autonomously planning, executing and iterating on its own work, and ultimately delivering complete engineering-grade results. In official..- 1.8k

-

Google open-sources the Gemma 4 model series

On the morning of April 3, Google DeepMind released Gemma 4, a new generation of open-source models, launching four models at once that cover scenarios from on-device to workstation. E2B: 5.1 billion total parameters, 2.3 billion effective parameters, 128K context, officially stated to use under 1.5GB of memory on some devices; E4B: 8 billion total parameters, 4.5 billion effective parameters, 128K context, MMLU Pro 69.4%, close to the previous generation's 27B level; 26B A4B M..- 2.5k

-

Jellyfish: a one-stop AI tool for generating short dramas (vertical-screen shorts / micro-dramas) that turns a script into storyboards with one click

Jellyfish is a one-stop AI comic-video production tool that automatically generates storyboards, characters, scenes and full videos from novel text. Its overall positioning is an "AI short-drama factory". Its core objective is to upgrade short-drama production from a manual or semi-automatic mode to an industrial pipeline. The tool is fully open source, supporting local deployment and secondary development, and at the technical level it also precisely targets the biggest pain points of AI video generation. Jellyfish features. Script input: just provide a text script; Chinese and English are supported. Smart Mirror..- 7.4k

-

NemoClaw: an open-source AI agent platform and tool for deploying secure AI assistants

NVIDIA NemoClaw is an open-source tool for deploying secure AI assistants. It installs with one click, helping users quickly build and run secure, autonomous AI assistants for a variety of scenarios. NemoClaw strengthens the security of AI assistants and simplifies the deployment process. NemoClaw features. One-click secure deployment: rapidly deploys a secure, always-on AI assistant with a single command. NemoClaw combines security and privacy controls to make it easier for developers to build and run AI assistants. Supporting Ren..- 2.2k

-

OpenAI offers open-source project developers six months of ChatGPT Pro for free, with no hard requirements such as star count or monthly downloads

On March 7, OpenAI announced today the Codex Open Source program, which provides free ChatGPT Pro subscriptions to open-source project maintainers and developers. OpenAI states that open-source maintainers quietly carry out important work in the global software ecosystem, and that over the past year the Codex Open Source Fund has supported a number of projects that need API access, totalling $1 million (note: approximately RMB 6,917,000 at the current exchange rate). At the same time, get a free ChatGPT Pro..- 1.6k

-

Alibaba CEO confirms Lin Junyang's departure: the open-source strategy remains unchanged and AI investment continues to increase

Yesterday, Alibaba CEO Wu Yongming sent an internal email to all staff of Tongyi Lab, formally confirming that technical lead Lin Junyang has left. In the letter, Wu Yongming announced that the company will set up a foundation model support group, coordinated jointly by Wu Yongming himself, Tongyi Lab head Zhou Jingren and Fan Xian, to support the building of the foundation model. APPSO understands that a new round of organizational and strategic adjustments is under way within Alibaba, with a plan to upgrade the foundation model as a whole and to recruit top technical talent on a large scale- 1.1k

-

Alibaba's desktop agent tool CoPaw open-sourced: free access to local models, support for DingTalk, Feishu, QQ, etc.

On March 2, Alibaba Cloud announced today that its agent tool CoPaw is officially open source. Developers can build on CoPaw, freely connect local models, write Skills and connect proprietary messaging applications to meet more customized scenarios. According to the announcement, CoPaw natively supports chat software and platforms such as DingTalk, Feishu, QQ, Discord and iMessage, ships with multiple built-in Skills, and can be deployed locally with one click or to the cloud with one click through Alibaba Cloud's Compute Nest and the ModelScope community's Studio.. -

NanoClaw: an open-source, lightweight personal AI assistant and a secure OpenClaw alternative

NanoClaw is an open-source AI assistant and a lightweight alternative to OpenClaw. Each agent runs in a separate sandbox and can only access explicitly mounted directories. NanoClaw supports multi-channel access through WhatsApp, Telegram, Discord and others, and pioneers Agent Swarms cluster collaboration for personal AI assistants. NanoClaw abandons traditional configuration files; users instead use natural-language commands to have Claude Code change the source code directly to bespoke..- 4k

-

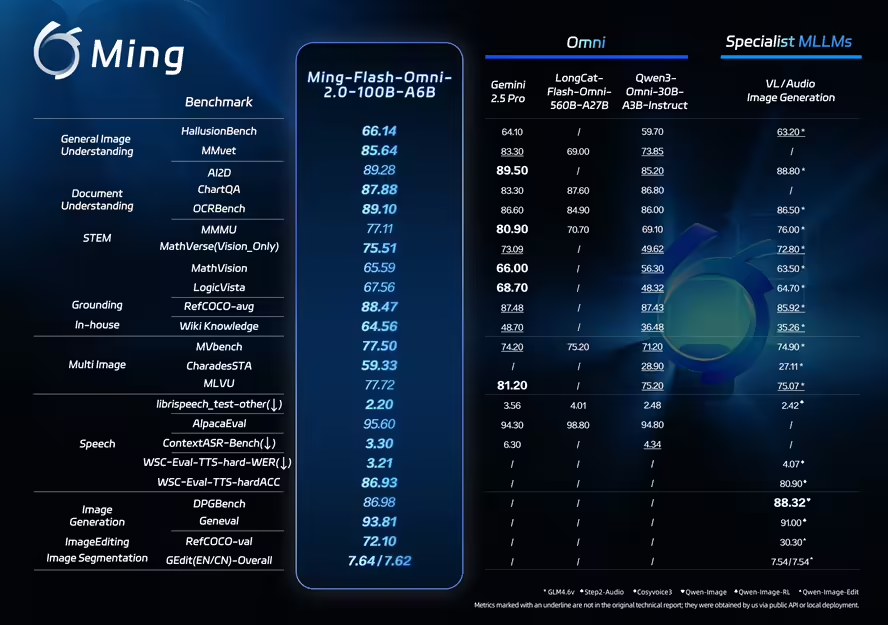

Ant Group releases and open-sources the omni-modal large model Ming-Flash-Omni 2.0: sees more clearly, hears better, generates more stably

On February 11, Ant Group open-sourced its omni-modal large model Ming-Flash-Omni 2.0. In a number of public benchmark tests, the model performed strongly in key capabilities such as visual-language understanding, controllable speech generation, and image generation and editing. Ming-Flash-Omni 2.0 is described as the industry's first unified audio generation model for all scenarios, able to generate speech, ambient sound and music on the same track. Users can precisely control voice, speed, tone, volume, emotion and dialect using only natural-language instructions. Model.. -

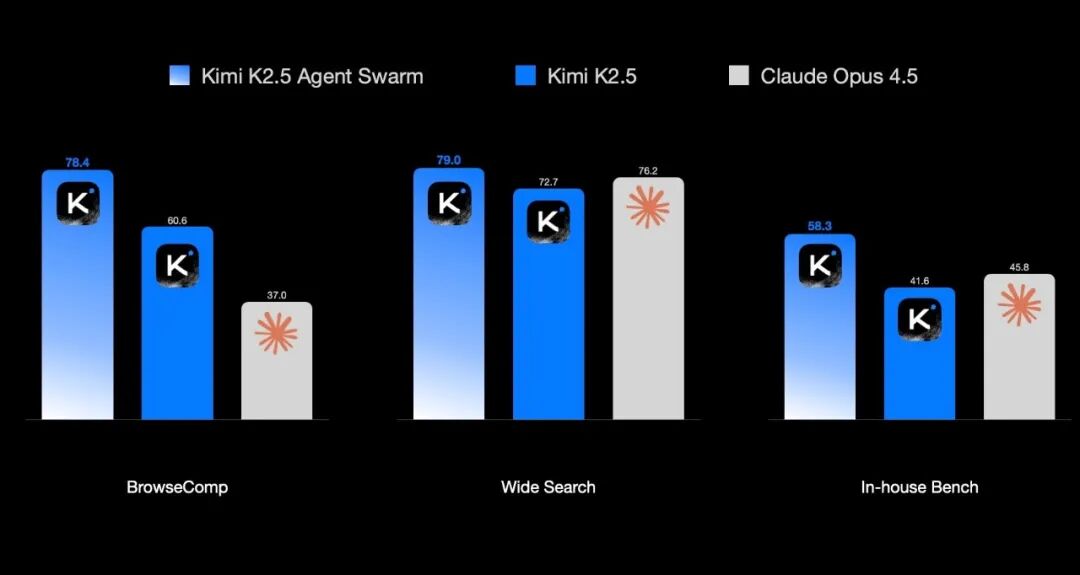

Moonshot AI launches its strongest open-source model

On January 28, Moonshot AI officially released the latest version of its flagship model, Kimi K2.5, yesterday, with comprehensive upgrades in vision, multimodal understanding, code generation and agent capabilities. According to the announcement, Kimi K2.5 uses a native multimodal architecture, supports text, image and video input, and can perform tasks such as image analysis, video analysis and visual programming. Official demos show that the model can generate 3D models from 2D plans, rebuild web interfaces from video, and achieve more accurate path planning and visual debugging in image reasoning tasks..- 1.6k

-

Alibaba Cloud open-sources the 6B-parameter Z-Image base model, generating photos without the "AI face" look

On January 28, Alibaba Cloud's Tongyi officially launched the Z-Image base model today. The model has 6B parameters. As a non-distilled base model, it preserves the full weight distribution, supports the CFG guidance mechanism, and provides a training base for fine-tuning tasks such as LoRA and ControlNet. Z-Image claims to break the limits of single-dimension realism: whether it is photorealism that pursues light and shadow, or anime and digital art with emotional tension, Z-Image captures and reconstructs every.. -

Clawdbot is here: install a 24/7 AI assistant

An open-source AI assistant called Clawdbot has recently become very popular online: it can run on a server 24/7, and users message it through an instant messaging platform to direct it to do all kinds of work. Description alone does not convey its ability; as the example above illustrates, Clawdbot is better at "doing things" than an ordinary AI chatbot, and in that example it did all the work of downloading the YouTube plugin. How can we get one? I. Requirements for deploying Clawdbot. 1. Telegram: this is the simplest, official push..- 7.3k

-

Clawdbot installation and getting started (a hands-on guide for beginners)

What is Clawdbot? Clawdbot is an open-source, self-hosted AI assistant framework maintained by community developers (website: clawd.bot), which lets you integrate AI models (e.g., Anthropic Claude, OpenAI GPT or other API-supported models) into chat applications. Through natural-language conversations, you can have the AI execute server commands, read and write files, search the Internet, manage calendars, send mail, control other services, and even access mobile cameras or push notifications..- 42.2k

-

Coderrr: a powerful open-source AI coding assistant CLI tool that writes, debugs and deploys code

Coderrr is an open-source AI coding assistant designed to accelerate the development process. From natural-language descriptions, it generates, debugs and deploys code, and it suits a variety of development scenarios. Coderrr features. AI-driven code generation: generates production-ready code from natural-language descriptions, simplifying the development process. Self-healing error recovery: automatically analyzes errors and retries with fixes to improve code quality and development efficiency. Multi-turn conversational iterative development: development proceeds through natural dialogue, making the process smoother and closer to how people think. Smart codebase understanding:..- 1.7k

-

Musk announces the open-sourcing of X's new recommendation algorithm: core code fully disclosed

January 21 news: yesterday, Elon Musk announced that X has officially open-sourced its new recommendation algorithm and simultaneously made the complete code repository public on GitHub. The X Engineering Team wrote yesterday that the new algorithm is based on the same Transformer architecture as xAI's Grok model and covers all the core logic the platform uses to recommend "organic content" and "advertising content." Musk added that X will update the algorithm every four weeks in the future, with developer notes, so that the outside world can understand changes to the recommendation mechanism. He's..- 1.4k

-

Former Google CEO Schmidt: Europe must either invest in open-source AI or depend on Chinese models

On January 21, according to Bloomberg, former Google CEO and technology investor Eric Schmidt said on Tuesday that Europe must invest in building its own open-source AI labs and solve the problem of soaring energy prices, or it will soon find itself dependent on Chinese models. Speaking at the World Economic Forum in Davos, Schmidt said: “In the United States, businesses are largely turning to closed source, which means that these technologies will be purchased, licensed, etc. At the same time, China's approach is largely open-weight and open-source. Unless Europe is willing for Europe..- 6.1k

-

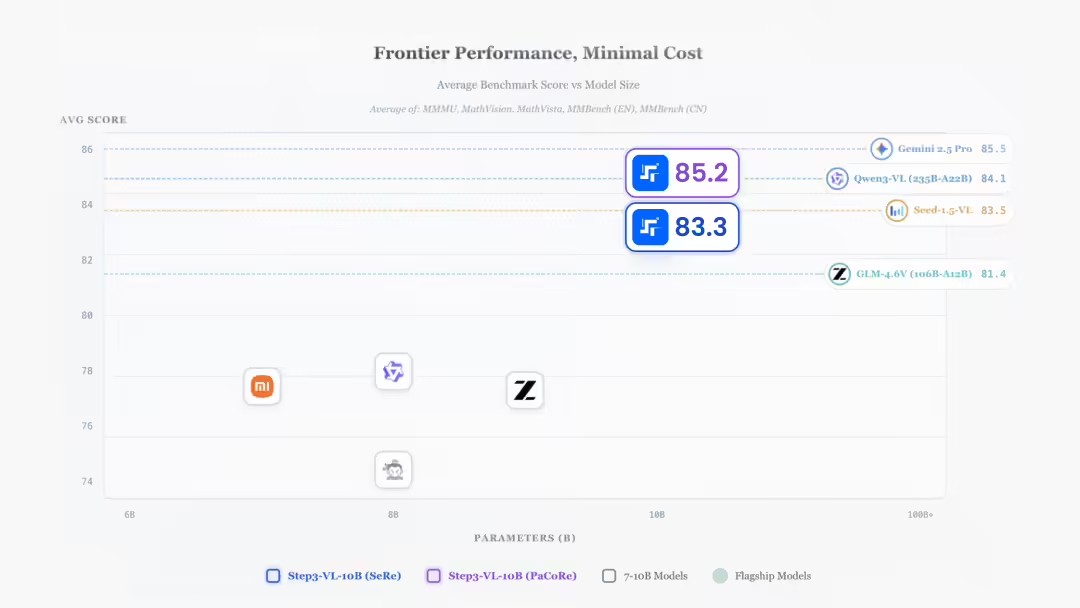

Step3-VL-10B: performance comparable to hundred-billion-scale large models

On January 21, StepFun open-sourced Step3-VL-10B. According to the announcement, with only 10B parameters, Step3-VL-10B achieves SOTA among models of the same scale in a series of benchmarks covering visual perception, logical reasoning, mathematical competitions and general dialogue. 1AI attaches the text of the official introduction as follows: With only 10B parameters, Step3-VL-10B in visual perception,..- 1.2k

-

Zhipu's GLM-4.7-Flash model released and open-sourced, free to call

On January 20, Zhipu's GLM-4.7-Flash model was officially released and open-sourced today. GLM-4.7-Flash is a hybrid thinking model with 30B total parameters and 3B active parameters, billed as SOTA among models of its class, providing a new option for lightweight deployment that balances performance and efficiency. Starting today, GLM-4.7-Flash replaces GLM-4.5-Flash on Zhipu's open platform BigModel.cn and is available to call for free..- 2.1k
